Enterprise AI chatbots have quickly moved from simple support add-ons to core infrastructure for large organizations. Today, they are expected to handle complex customer interactions, connect with internal systems, and operate across multiple channels without fragmenting the customer experience.

But not all tools are built for enterprise needs. Some can only answer basic questions, while others are designed to support complex workflows, large teams, and strict security requirements.

Below, you’ll find some of the best enterprise AI chatbot platforms available today, each built for different use cases, industries, and levels of technical complexity.

What is an enterprise AI chatbot?

An enterprise AI chatbot is an advanced conversational system built to handle high volumes of customer or employee interactions across multiple channels, while integrating deeply with business systems and workflows.

Unlike basic chatbots that follow simple scripts, enterprise AI chatbots use natural language understanding, automation, and access to real-time data to resolve complex requests.

They can answer questions, complete transactions, route conversations, and even take action inside connected systems such as CRMs, order management platforms, or internal tools.

Large organizations typically use these chatbots to support customer service, sales, IT help desks, and internal operations at scale.

What makes a chatbot “enterprise”?

Here are the key characteristics of an enterprise chatbot and what sets it apart from a basic bot embedded on your website.

Handles large-scale interactions: Enterprise chatbots are built to manage thousands of concurrent conversations, and millions over time, across global teams and customer bases without performance issues.

Deep integrations with existing systems: They connect with tools like CRM platforms, contact center software, knowledge bases, and internal databases, allowing them to pull customer data, update records, and trigger workflows in real time.

Advanced AI and automation capabilities: Enterprise systems understand intent, context, and conversation history, and can automate full workflows rather than just answering questions.

Omnichannel support: They operate across multiple channels, including web chat, mobile apps, messaging platforms, and voice, ensuring consistent experiences wherever users engage.

Security and compliance: Enterprise environments require strict data protection, access control, and compliance with regulations such as GDPR or HIPAA. These chatbots are designed with those requirements in mind.

Customization and control: Teams can tailor conversation flows, business logic, and integrations to match specific operational needs, rather than relying on one-size-fits-all templates.

Human handoff and collaboration: When needed, the chatbot can transfer conversations to human agents with full context, ensuring smooth collaboration between automation and support teams.
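To make "full context" more concrete, here is a minimal Python sketch of what a handoff might package for the receiving agent, plus a simple rule for deciding when to escalate. The field names (`conversation_id`, `detected_intent`, `collected_data`, and so on), the `should_hand_off` rule, and the 0.6 confidence threshold are illustrative assumptions, not the schema or logic of any platform covered in this article.

```python
from dataclasses import dataclass, field, asdict
from typing import Dict, List, Tuple
import json

# Hypothetical phrases that signal the customer wants a person.
HUMAN_REQUEST_PHRASES = ("agent", "human", "representative")


@dataclass
class Message:
    sender: str  # "customer" or "bot"
    text: str


@dataclass
class HandoffPayload:
    """Illustrative schema: what the agent needs so the customer never repeats themselves."""
    conversation_id: str
    channel: str  # e.g. "web_chat", "sms", "voice"
    customer_id: str
    detected_intent: str
    collected_data: Dict[str, str]
    transcript: List[Message] = field(default_factory=list)
    reason: str = ""


def should_hand_off(intent_confidence: float, user_text: str, threshold: float = 0.6) -> bool:
    """Escalate when the customer asks for a person or the bot is unsure of the intent."""
    asked_for_human = any(p in user_text.lower() for p in HUMAN_REQUEST_PHRASES)
    return asked_for_human or intent_confidence < threshold


def build_handoff(conversation_id: str, channel: str, customer_id: str, intent: str,
                  slots: Dict[str, str], history: List[Tuple[str, str]],
                  reason: str) -> HandoffPayload:
    """Package the bot's accumulated context for the agent desktop."""
    return HandoffPayload(
        conversation_id=conversation_id,
        channel=channel,
        customer_id=customer_id,
        detected_intent=intent,
        collected_data=dict(slots),
        transcript=[Message(sender, text) for sender, text in history],
        reason=reason,
    )


if __name__ == "__main__":
    history = [("customer", "My order 1234 arrived damaged"),
               ("bot", "Sorry to hear that! Do you want a refund or a replacement?"),
               ("customer", "I'd like to talk to a human, please")]
    if should_hand_off(intent_confidence=0.92, user_text=history[-1][1]):
        payload = build_handoff("conv-001", "web_chat", "cust-42", "damaged_item",
                                {"order_id": "1234"}, history,
                                reason="customer requested agent")
        # asdict() converts the nested transcript dataclasses into plain dicts for JSON.
        print(json.dumps(asdict(payload), indent=2))
```

The key design point is that the agent receives the intent, the details already collected, and the transcript together, so the conversation picks up where the bot left off instead of starting over.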

The best enterprise AI chatbot solutions to boost customer satisfaction in 2026

The market for enterprise AI chatbots is booming, with new tools released seemingly every month. If you need operational efficiency, enterprise-grade security, and capable AI agents for scalable support, here are some of the best enterprise AI platforms to help you scale customer support without sacrificing quality.

1. Quiq – best for enterprises that want realistic AI interactions with strong contact center control

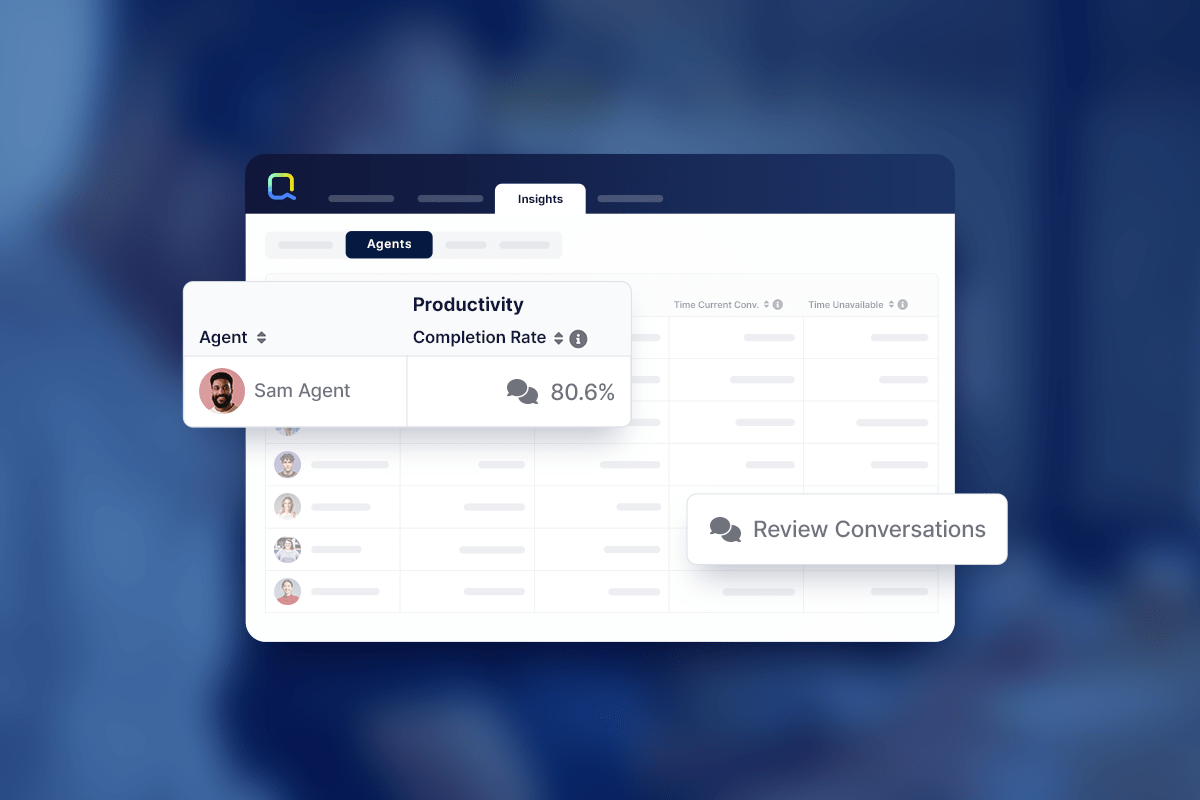

Quiq is a conversational AI and contact center platform built for enterprises that want to automate customer interactions without losing control over quality and experience.

It is popular in industries like travel, retail, financial services, and telecommunications, where support teams handle high volumes of inquiries and need reliable automation that works alongside human agents. Quiq focuses on delivering human-like responses while connecting conversations to backend systems and existing support workflows.

Key features

- Human-like responses: AI agents are designed to carry out natural conversations that feel closer to human interactions, especially in more complex support scenarios

- Omnichannel support: Manage conversations across chat, voice, messaging, and social channels within a single platform

- Support ticket integration: Connect with ticketing tools and existing service systems to maintain full context across interactions

- Backend systems connectivity: Integrate with CRMs, order systems, and internal tools to retrieve data and take action in real time

- Productivity tools for agents: Route conversations intelligently, assist agents during live chats, and boost productivity across support teams

Quiq still requires thoughtful setup, especially when defining workflows, integrations, and conversation logic across different channels. Enterprises may need time to align internal teams and systems before getting the most out of the platform.

That said, once implemented properly, Quiq gives teams a strong balance between automation and control, making it a reliable option for companies that want to scale support without sacrificing the quality of customer interactions.

Book a free demo with our team to find out what Quiq can do for your business.

2. Kore.ai – best for enterprises automating both customer and employee interactions across departments

Kore.ai is an enterprise conversational platform used to build virtual assistants for customer service, employee support, and internal operations.

It is commonly used in banking, healthcare, retail, and telecom, where organizations need to automate interactions across multiple departments. Kore.ai is best for companies that want to manage conversations, workflows, and integrations in one place across both external and internal use cases.

Key features

- Multi-use case automation: Build assistants for customer support, HR, IT help desks, and more within a single platform

- Advanced conversation design: Create structured and multi-step interactions with strong control over dialog flows

- Enterprise integrations: Connect with CRM systems, contact center platforms, and internal tools to trigger actions and retrieve data

- Omnichannel deployment: Deploy assistants across web, mobile, messaging apps, and voice channels

- Analytics and optimization: Track performance, user behavior, and conversation outcomes to improve experiences over time

Kore.ai can be complex to configure, especially when managing multiple use cases across departments. The platform often requires technical expertise or partner support to fully implement and maintain. Its pricing and feature set are geared toward large enterprises, which makes it less practical for smaller teams or companies with more focused needs.

3. NiCE Cognigy – best for enterprises automating complex customer service workflows

NiCE Cognigy is a conversational AI platform built for enterprises that want to automate customer interactions across voice and messaging channels.

It is a common choice in industries like telecom, insurance, banking, and travel, where support teams handle large volumes of repetitive tasks and need reliable self-service options. Cognigy focuses on helping organizations scale support operations while keeping control over how conversations and workflows are managed.

Key features

- Automation of repetitive tasks: Handle common support requests automatically, reducing the load on human agents

- Generative AI capabilities: Use generative AI to create more natural responses and adapt to different customer inputs

- Self-service experiences: Build self-service flows that resolve issues without agent involvement

- Workflow orchestration: Automate complex workflows across systems, teams, and channels

- Enterprise scalability: Scale support operations across regions, languages, and large customer bases

Cognigy can require significant setup and planning before it delivers value, especially for teams that want to automate complex workflows across multiple systems. It often depends on technical resources or partners for implementation, which can slow down the time to launch. Pricing is also positioned for enterprise use, making it less suitable for smaller teams or companies with simpler support needs.

4. Google Dialogflow CX – best for teams building complex, structured conversational flows

Google Dialogflow CX is a platform for building conversational AI bots with a strong focus on structured conversation design and flow control.

It’s popular in industries like telecom, finance, travel, and utilities that need reliable, repeatable interactions across support and service channels. Dialogflow works for teams that want to design consistent support experiences with clear logic and control over how conversations progress.

Key features

- Visual conversation builder: Design complex conversational flows using a state-based interface for better control

- AI-powered automation: Automate customer interactions while maintaining structured, predictable outcomes

- Conversational AI bots: Build bots that handle multi-step interactions across support and service use cases

- Omnichannel deployment: Deploy bots across web, mobile, messaging platforms, and voice interfaces

- Testing and versioning tools: Manage updates, test flows, and maintain consistent support across releases

Dialogflow CX can feel rigid compared to more flexible platforms, especially when handling less predictable conversations. It often requires technical knowledge to fully configure and maintain, particularly for integrations and advanced use cases. Costs can also scale quickly depending on usage, which may be a concern for teams with high interaction volumes.

5. Amazon Lex – best for companies already using AWS to build custom chat and voice assistants

Amazon Lex is a developer-focused service from AWS that lets teams build chat and voice interfaces using conversational AI.

Lex is popular with enterprises in industries like e-commerce, banking, travel, and telecom that need flexible control over how bots are built and deployed. It’s built for organizations with in-house development teams that want to create custom solutions that integrate seamlessly with existing systems.

Key features

- Conversational AI engine: Build chat and voice bots that understand intent and manage multi-step conversations

- Multilingual support: Create bots that can handle interactions in multiple languages for global customer bases

- Seamless integration with AWS: Connect with services like Lambda, S3, and DynamoDB to power backend workflows

- Voice and chat capabilities: Support both text-based and voice interactions within the same platform

- Scalable infrastructure: Handle large volumes of interactions with AWS-backed reliability and performance

Amazon Lex requires technical expertise to build and maintain, which makes it less accessible for non-technical teams. It does not provide a ready-to-use contact center experience out of the box, so companies need to build additional layers for routing, reporting, and agent workflows. Costs can also become difficult to predict as usage grows, especially for high-volume deployments.

6. Sprinklr – best for global brands managing customer conversations across many channels

Sprinklr is a unified customer experience platform used by large enterprises to manage customer interactions across social media, messaging apps, live chat, and voice.

It is widely adopted in industries like retail, telecom, airlines, and financial services, where teams need to coordinate support, marketing, and engagement across many touchpoints. The platform is built for organizations that want to centralize customer experiences and handle complex workflows across departments.

Key features

- Omnichannel engagement: Manage conversations across social, messaging, chat, and voice from a single platform

- Virtual agents: Deploy virtual agents to handle common requests and route more complex issues to human teams

- Workflow automation: Build and manage complex workflows that connect support, marketing, and operations

- Unified customer data: Combine customer interactions and profiles to deliver more consistent customer experiences

- Enterprise governance controls: Role-based access, approval flows, and compliance tools for large teams

Sprinklr can feel overwhelming for teams that only need a focused chatbot or support solution. The interface and setup process can take time to learn, especially when configuring complex workflows across multiple departments. It is also priced for enterprise use, which makes it difficult to justify for smaller teams or companies with simpler customer support needs.

7. Genesys – best for large enterprises with complex contact center operations

Genesys is a contact center platform used by large organizations to manage customer interactions across voice, chat, email, and messaging. It is common in industries like telecom, banking, insurance, healthcare, and travel, where support teams handle high volumes of conversations across multiple regions. The platform is built for companies that need deep control over routing, reporting, and customer journeys.

Key features

- Omnichannel routing: Manage voice, chat, email, and messaging interactions in one system with consistent routing logic

- Advanced call center infrastructure: Built-in tools for IVR, workforce management, and queue optimization at scale

- Journey orchestration: Map and control customer journeys across multiple touchpoints and teams

- Enterprise analytics and reporting: Detailed dashboards for tracking performance, agent productivity, and customer outcomes

- Global scalability: Supports large distributed teams with localization, regional compliance, and high availability

Genesys can be difficult to set up and maintain, especially for teams without dedicated technical resources. Implementation often requires external consultants or long onboarding cycles. Pricing is also on the higher end, which makes it a poor fit for smaller companies or teams that do not need full contact center infrastructure.

Get scalable support with AI agents built for enterprise

Enterprise AI chatbots are no longer optional for large organizations. They are becoming a core part of how companies handle customer interactions, support internal teams, and manage growing volumes of conversations across channels.

The right platform depends on what you actually need. Some tools focus on building custom bots from scratch, others specialize in contact center operations, and a few aim to connect everything into one system. The key is finding a solution that fits your workflows, integrates with your existing tools, and can handle real-world complexity without breaking under pressure.

If your goal is to deliver secure, high-quality interactions at scale, you need more than just automation. You need a platform that balances control, flexibility, and realistic conversations.

You need Quiq.

It gives enterprises the ability to automate support while keeping conversations natural, connected to backend systems, and aligned with how real support teams work.

Book a demo with Quiq to see how you can scale support without losing control over the customer experience.

FAQs

What is the difference between basic automation and an enterprise AI chatbot?

Basic automation usually follows predefined rules and scripts, which works for simple tasks but breaks down with more complex requests. Enterprise AI chatbots use artificial intelligence to understand intent, maintain conversation context, and handle more advanced interactions across systems and channels.

Can enterprise AI chatbots deliver personalized responses?

Yes, enterprise chatbots can deliver personalized responses by pulling data from CRM systems, support tools, and other backend sources. This allows them to tailor replies based on user history, preferences, and previous interactions, rather than giving generic answers.

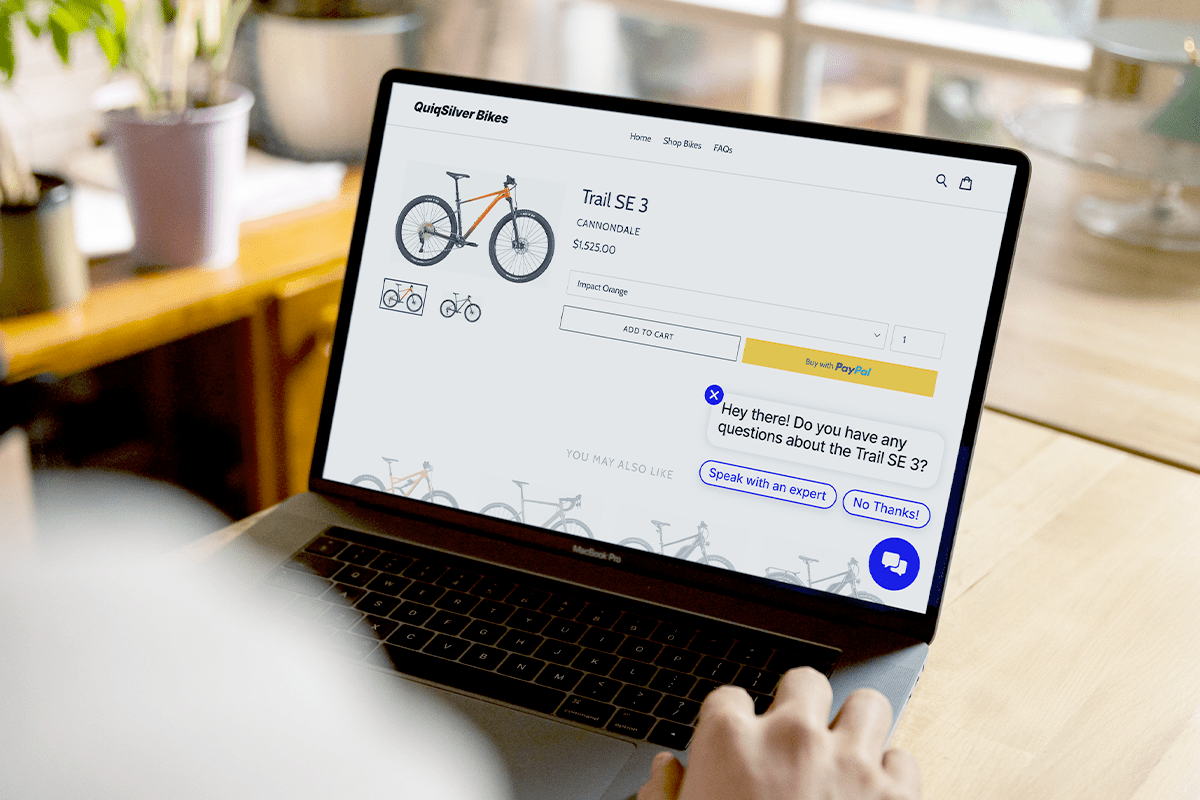

How do enterprise chatbots help website visitors?

They guide website visitors in real time by answering questions, recommending products or services, and helping them complete actions like booking or purchasing. This reduces friction and ensures visitors get accurate answers without needing to wait for a human agent.

How important is conversation context in enterprise AI chatbots?

Conversation context is critical. Enterprise chatbots track previous messages, user data, and intent throughout the interaction, which helps them respond more accurately and avoid repetitive or irrelevant answers. This is especially important for longer or more complex conversations.
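Here is a minimal Python sketch of what tracking context can look like in practice: the bot keeps details from earlier turns (like an order number) and, when the customer switches topics, only asks for what is still missing. It assumes intent detection and data extraction happen upstream; the `REQUIRED_SLOTS` mapping, the intent names, and the helper functions are hypothetical and not taken from any specific platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical mapping from intent to the details the bot must collect before acting.
REQUIRED_SLOTS: Dict[str, List[str]] = {
    "order_status": ["order_id"],
    "change_address": ["order_id", "new_address"],
}


@dataclass
class ConversationContext:
    history: List[str] = field(default_factory=list)
    slots: Dict[str, str] = field(default_factory=dict)
    current_intent: Optional[str] = None

    def update(self, user_text: str, intent: Optional[str] = None,
               new_slots: Optional[Dict[str, str]] = None) -> None:
        """Record a turn; keep the previous intent and slots unless new ones arrive."""
        self.history.append(user_text)
        if intent is not None:
            self.current_intent = intent
        if new_slots:
            self.slots.update(new_slots)

    def missing(self, required: List[str]) -> List[str]:
        return [name for name in required if name not in self.slots]


def next_prompt(ctx: ConversationContext) -> str:
    """Ask only for what is still unknown, reusing details from earlier turns."""
    if ctx.current_intent is None:
        return "How can I help you today?"
    missing = ctx.missing(REQUIRED_SLOTS.get(ctx.current_intent, []))
    if missing:
        return "Could you share your " + missing[0].replace("_", " ") + "?"
    return "Got everything needed for " + ctx.current_intent + "."


if __name__ == "__main__":
    ctx = ConversationContext()
    # Intent and slot values are assumed to come from an upstream NLU step.
    ctx.update("Where is my order 1234?", intent="order_status", new_slots={"order_id": "1234"})
    ctx.update("Actually, can I change the delivery address?", intent="change_address")
    print(next_prompt(ctx))  # the order ID carries over, so only the address is requested
```

Without the stored context, the second request would force the bot to ask for the order number again, which is exactly the kind of repetitive answer described above.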

Why are enterprise AI chatbots becoming essential for modern enterprises?

Modern enterprises deal with large volumes of customer and employee interactions across multiple channels. AI chatbots help manage this scale, provide accurate answers, and support teams by handling repetitive requests while still allowing human agents to step in when needed.