Key Takeaways

- Voicebot conversational AI uses speech recognition, natural language processing, and text-to-speech to enable natural phone interactions that understand intent and handle interruptions, replacing rigid “press 1 for billing” menu systems.

- Enterprise voicebots maintain continuous context across voice, chat, and SMS channels, allowing customers to switch between touchpoints without repeating information or losing conversation history.

- Companies like Roku achieve 48% containment rates using voicebots (or Voice AI Agents as we call them) for technical troubleshooting, demonstrating measurable ROI through reduced cost per contact and 24/7 availability without additional staffing.

- Modern voicebot platforms provide decision transparency and audit trails for enterprise compliance while seamlessly escalating complex or emotional conversations to human agents with full context transfer.

Voicebot conversational AI (what we call Voice AI Agents) is technology that enables natural, human-like phone interactions using speech recognition, natural language processing, and text-to-speech. It replaces the rigid “press 1 for billing” menus that have frustrated callers for decades. Unlike traditional IVR systems, voicebots understand intent, handle complex multi-turn conversations, and respond to interruptions the way a human would.

This guide covers how the technology works, the features that matter for enterprise deployment, and practical use cases from companies already seeing results.

What is voicebot conversational AI vs. legacy IVR systems?

A voicebot conversational AI is technology that uses Automatic Speech Recognition (ASR), Natural Language Processing (NLP), and text-to-speech to enable natural, human-like conversations.

At Quiq, we call this Voice AI Agents.

Unlike traditional IVR systems that force you through “press 1 for billing” menus, conversational AI voicebots support free-form speech, understand intent through natural language understanding, and handle complex tasks without rigid menu trees. Interactive voice response systems rely on keypad inputs and pre-recorded prompts; conversational AI voice technology enables real-time interactions that feel genuinely human.

AI voice assistants and voice agents built on generative AI go further still, enabling response generation that adapts dynamically to each caller.

Voice AI Agents understand intent, handle complex queries, and can be interrupted mid-sentence. That last part matters more than you’d think—it’s what makes the conversation feel real.

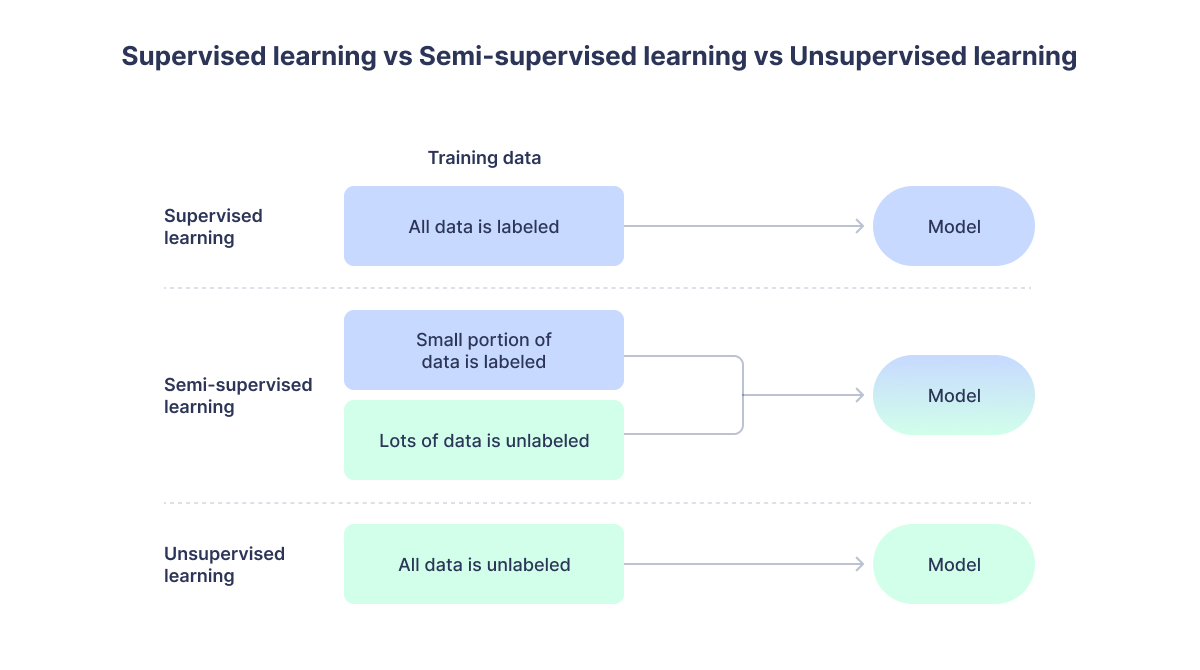

The difference between legacy IVR systems and voicebot conversational AI comes down to flexibility:

Traditional systems operate on pre-recorded messages and keypad inputs, offering a fixed set of responses from a pre-programmed menu. Voicebots, on the other hand, understand free-form natural speech, adapt to context, and resolve issues dynamically.

| Capability | Traditional IVR | Voicebot Conversational AI |
|---|---|---|
| Input method | Touch-tone or simple voice commands | Natural speech in any phrasing |
| Conversation flow | Rigid, menu-based | Dynamic, context-aware |
| Complex queries | Limited or impossible | Handles multi-turn conversations |
| Interruptions | Not supported | Responds naturally |

How voicebot conversational AI technology works

Each component in the technology stack plays a specific role. When you understand how the pieces fit together, it becomes clear why modern voice bots feel so different from the phone trees we’ve all learned to dread.

Speech recognition and voice-to-text

The process starts when a customer speaks. Automatic Speech Recognition, or ASR, converts spoken words into text. Modern ASR systems use deep learning models trained on millions of hours of human speech, so they handle accents, background noise, and varied speaking speeds far better than earlier versions did.

Natural language processing and intent detection

Once the system has text, NLP figures out what the caller actually wants. Natural language processing is what makes voice bot AI conversational rather than scripted—it interprets meaning, not just keywords.

If someone says “I can’t get my TV to connect” or “my streaming device won’t find my WiFi”, the system recognizes both as the same underlying issue.
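To make the idea concrete, here is a minimal sketch of intent detection. It assumes a simple keyword-overlap score as a stand-in for the trained language models a production system would use; the intent names and keyword lists are hypothetical and exist only for illustration.

```python
import re

# Hypothetical intent vocabulary for illustration only.
# A production voicebot would use a trained NLU model, not keyword sets.
INTENT_KEYWORDS = {
    "device_connectivity": {"connect", "wifi", "network", "internet"},
    "billing": {"bill", "charge", "payment", "invoice"},
}


def detect_intent(utterance: str) -> str:
    """Return the intent whose keywords overlap most with the utterance."""
    tokens = set(re.findall(r"[a-z]+", utterance.lower()))
    scores = {
        intent: len(tokens & keywords)
        for intent, keywords in INTENT_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    # Fall back to "unknown" when no keyword matched at all.
    return best if scores[best] > 0 else "unknown"


# Two different phrasings resolve to the same underlying intent.
print(detect_intent("I can't get my TV to connect"))
print(detect_intent("My streaming device won't find my WiFi"))
```

Both calls print `device_connectivity`. Even in this toy version, the caller's exact wording matters less than what they are trying to do.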

Dialogue management and conversation flow

Here’s where things get interesting. The dialogue manager tracks context across the entire conversation and decides what to say next. With agentic AI approaches, the system handles multi-turn conversations without rigid scripts, adapting when customers change topics or add new information mid-conversation.
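A rough sketch of that context tracking might look like the following. It assumes a simple slot-filling approach, where the dialogue manager remembers what it already knows and asks only for what is missing. The slot names, action names, and `REQUIRED_SLOTS` table are hypothetical, and agentic systems typically rely on far richer state than this.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical: the details each intent needs before it can be resolved.
REQUIRED_SLOTS = {"device_connectivity": ["device_model", "network_name"]}


@dataclass
class DialogueState:
    """Context carried across every turn of one conversation."""
    intent: Optional[str] = None
    slots: Dict[str, str] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)


def next_action(state: DialogueState, utterance: str,
                intent: Optional[str] = None,
                slots: Optional[Dict[str, str]] = None) -> str:
    """Update the state with the latest turn and pick the next step."""
    state.history.append(utterance)
    if intent and intent != state.intent:
        state.intent = intent  # the caller raised or changed the topic
    state.slots.update(slots or {})  # keep anything learned mid-conversation

    if state.intent is None:
        return "ask_how_to_help"
    missing = [s for s in REQUIRED_SLOTS.get(state.intent, [])
               if s not in state.slots]
    return f"ask_{missing[0]}" if missing else "resolve"


state = DialogueState()
print(next_action(state, "Hi"))
print(next_action(state, "My TV won't connect",
                  intent="device_connectivity", slots={"device_model": "TV"}))
print(next_action(state, "The network is HomeNet",
                  slots={"network_name": "HomeNet"}))
```

The three calls print `ask_how_to_help`, `ask_network_name`, and `resolve`. The system never asks for the device model twice, because that detail stays in the state once the caller has mentioned it.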

Speech synthesis and text-to-speech

After determining the response, text-to-speech (TTS) converts it back to natural-sounding audio.

Voice quality matters: a robotic-sounding response undermines the entire experience. Modern neural TTS systems produce speech with appropriate tone, pacing, and even emotional inflection, making real-time voice interactions feel far more natural.
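Put together, a single conversational turn flows through four stages: speech recognition, intent detection, dialogue management, and speech synthesis. The sketch below wires those stages up with stand-in functions. Every `fake_*` function is a hypothetical placeholder for a real ASR, NLU, dialogue, or TTS component, and the "audio" is just encoded text so the example can run anywhere.

```python
from typing import Callable


def handle_turn(audio: bytes,
                transcribe: Callable[[bytes], str],
                detect_intent: Callable[[str], str],
                choose_reply: Callable[[str], str],
                synthesize: Callable[[str], bytes]) -> bytes:
    """Run one caller turn through the four pipeline stages."""
    text = transcribe(audio)        # speech recognition: audio to text
    intent = detect_intent(text)    # NLP: text to intent
    reply = choose_reply(intent)    # dialogue management: intent to reply
    return synthesize(reply)        # text-to-speech: reply to audio


# Stand-in components (hypothetical) so the sketch runs without any services.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_detect_intent(text: str) -> str:
    return "order_status" if "order" in text.lower() else "unknown"


def fake_choose_reply(intent: str) -> str:
    replies = {"order_status": "Your order is on its way."}
    return replies.get(intent, "Could you tell me more?")


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


audio_out = handle_turn(b"Where is my order?", fake_transcribe,
                        fake_detect_intent, fake_choose_reply, fake_synthesize)
print(audio_out.decode("utf-8"))
```

Passing each stage in as a function keeps the components swappable, which is one reasonable way to upgrade a single stage (say, a better TTS voice) without touching the rest.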

Machine learning and continuous improvement

AI voicebots learn from interactions over time. They identify patterns in successful resolutions, recognize new ways customers phrase requests, and gradually improve accuracy. Machine learning also helps the system adapt to evolving speech patterns and customer expectations.

Voicebots are far from a “set it and forget it” technology: continuous optimization is built into how these systems improve.

Key features of AI voice bots

Not all voicebot platforms offer the same capabilities. When evaluating enterprise solutions, several features separate basic implementations from production-ready ones.

1. Continuous context across conversations

Customers shouldn’t repeat themselves when switching channels or returning later. The best platforms maintain one unbroken conversation, even when someone started on chat yesterday and calls today.

2. Omnichannel integration with chat and SMS

True omnichannel means more than supporting multiple channels. A customer can start troubleshooting on voice, receive a link via SMS during the same call without hanging up, and continue the conversation across channels without losing any context.

3. Decision transparency and configurable guardrails

Enterprises often ask: “How do I know what the AI is deciding?”

Platforms built for enterprise use show exactly how AI reached conclusions, with full audit trails for compliance and governance. You configure the guardrails, and the AI operates within them.
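As an illustration of how guardrails and an audit trail can work together, here is a minimal sketch. It assumes a simple allowlist of actions and an in-memory log; the action names and record fields are hypothetical, and real platforms will expose their own configuration and logging.

```python
from datetime import datetime, timezone
from typing import Dict, List

# Hypothetical guardrail: the only actions the AI may take on its own.
ALLOWED_ACTIONS = {"answer_faq", "check_order_status", "escalate_to_human"}


def apply_guardrail(proposed_action: str, reason: str,
                    audit_log: List[Dict[str, object]]) -> str:
    """Enforce the allowlist and record the decision for later review."""
    allowed = proposed_action in ALLOWED_ACTIONS
    # Anything outside the guardrail is routed to a human instead.
    final_action = proposed_action if allowed else "escalate_to_human"
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "proposed_action": proposed_action,
        "final_action": final_action,
        "allowed": allowed,
        "reason": reason,
    })
    return final_action


log: List[Dict[str, object]] = []
print(apply_guardrail("issue_refund", "caller asked for a refund", log))
print(log[0]["allowed"])
```

This prints `escalate_to_human` and `False`: the refund request falls outside the configured actions, so it goes to a person, and the log entry shows both what the AI proposed and what actually happened.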

4. Seamless human agent handoff

When escalation happens, context transfers with the customer. They don’t start over. The agent sees the full conversation history, what the voicebot already tried, and why the escalation occurred.
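One way to picture the handoff is as a small bundle of context that travels with the call. The sketch below is an assumption about what such a bundle could contain, based on the three items above (conversation history, what was tried, and why the escalation occurred); the `HandoffContext` class and its field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class HandoffContext:
    """What the human agent receives when a call is escalated."""
    customer_id: str
    escalation_reason: str
    transcript: List[str] = field(default_factory=list)
    attempted_steps: List[str] = field(default_factory=list)


def agent_summary(ctx: HandoffContext) -> str:
    """Build a one-line briefing so the agent does not start from zero."""
    steps = ", ".join(ctx.attempted_steps) or "none"
    return (f"Customer {ctx.customer_id} escalated ({ctx.escalation_reason}). "
            f"Already tried: {steps}. Turns so far: {len(ctx.transcript)}.")


ctx = HandoffContext(
    customer_id="c-123",
    escalation_reason="explicit_request",
    transcript=["My TV won't connect", "Please restart the device"],
    attempted_steps=["restart_device"],
)
print(agent_summary(ctx))
```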

Additional capabilities worth evaluating:

- Natural language understanding: Recognizes synonyms, slang, and varied phrasing.

- Knowledge base integration: Pulls answers from existing company documentation.

- Multilingual support: Handles multiple languages, sometimes switching mid-call.

- Analytics and reporting: Tracks containment rates, sentiment, and conversation outcomes.

Types of AI voicebots for business

Different business situations call for different voicebot configurations.

Inbound customer service voicebots

Inbound voicebots handle incoming calls for support, inquiries, and issue resolution. Typically the first point of contact, they resolve routine questions and gather information before escalating complex issues to live agents.

Outbound engagement voicebots

Outbound voicebots make proactive calls for appointment reminders, payment confirmations, surveys, and collections. Outbound calling campaigns that once required dedicated human resources can now run at scale through voice automation. These systems initiate contact rather than waiting for customers to call in.

Voice recognition chatbots with hybrid capabilities

Some systems work across both text and voice channels—what you might call a chatbot with voice. Voice recognition is central to this hybrid approach, which proves valuable when customers have strong channel preferences or switch between voice channels and digital channels frequently.

Benefits of conversational voice assistants for enterprise

The business case for voicebot conversational AI typically centers on measurable outcomes.

24/7 availability without added staffing costs

Customers get help at 2 AM without overnight shift scheduling. For global businesses, voice automation eliminates the complexity of follow-the-sun support models.

Reduced cost per contact

AI-powered voice bots handle routine inquiries at a fraction of the cost of live agents.

Costs drop further when voicebots shift routine contacts from phone to chat: an agent can handle several chat conversations at once but only one call at a time.

Customer satisfaction scores that actually improve

Faster resolution and no hold times improve CSAT and NPS. When customers don’t wait 20 minutes to ask a simple question, customer satisfaction naturally increases.

Personalized support—using account data to tailor responses—further raises the bar.

Scalability during peak volume periods

Holiday rushes, product launches, service outages—voicebots handle spikes in call volume without scrambling for temporary staff. A single system manages thousands of concurrent customer interactions.

Consistent brand experiences at scale

Every interaction follows your brand voice and standards. The AI doesn’t have bad days, doesn’t forget training, and doesn’t go off-script.

Increased agent productivity

When AI handles password resets and order status checks, human agents focus on complex issues that actually benefit from human judgment and empathy.

Conversational AI voice bot use cases in the call center

What specific tasks can a voicebot contact center handle? The list continues to expand, but several use cases have proven particularly effective.

Customer support and technical troubleshooting

Voicebots diagnose issues, walk through fixes, and resolve common customer queries. For example, Roku’s AI agent achieved a 48% containment rate on device troubleshooting—nearly half of inquiries needed no human involvement.
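Containment rate is simple arithmetic: calls resolved without a human, divided by total calls. The sketch below shows that calculation along with a rough blended cost per contact. The call counts and dollar figures are hypothetical placeholders (only the 48% rate comes from the Roku example), and the cost model assumes every call touches the voicebot while only non-contained calls also reach an agent.

```python
def containment_rate(contained_calls: int, total_calls: int) -> float:
    """Share of calls resolved without any human involvement."""
    if total_calls <= 0:
        raise ValueError("total_calls must be positive")
    return contained_calls / total_calls


def blended_cost_per_contact(rate: float, bot_cost: float,
                             agent_cost: float) -> float:
    """Average cost per call under the assumptions described above."""
    # Every call incurs the bot cost; escalated calls add the agent cost.
    return bot_cost + (1 - rate) * agent_cost


# Hypothetical volumes chosen to match a 48% containment rate.
rate = containment_rate(contained_calls=480, total_calls=1000)
print(f"Containment rate: {rate:.0%}")

# Hypothetical per-contact costs, for illustration only.
cost = blended_cost_per_contact(rate, bot_cost=0.50, agent_cost=6.00)
print(f"Blended cost per contact: ${cost:.2f}")
```

Plugging in your own volumes and costs gives a quick first estimate before a pilot produces real numbers.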

Account and billing inquiries

Balance checks, payment processing, plan changes. The voicebot securely prompts customers to authenticate, retrieves personalized account information, and tailors responses accordingly.

Appointment scheduling and confirmations

Appointment booking, rescheduling, and reminder calls are all well-suited to voice automation. Healthcare organizations use voicebots to manage appointment workflows that previously required dedicated staff.

Order status and management

Tracking, returns, cancellations. E-commerce companies handle order inquiries that once flooded call centers during peak seasons.

FAQ handling and self-service options

Store hours, policies, product information: high-volume, low-complexity customer inquiries are ideal candidates for automation. Self-service options powered by conversational AI voice bots let customers resolve issues on their own terms, without waiting for a human agent.

Lead qualification and intelligent call routing

Lead qualification is another area where voicebots add measurable value. Even when the voicebot can’t fully resolve an issue, it gathers context that makes the human handoff more efficient—and ensures the right agent receives the right customer.

How voice automation improves customer satisfaction

Why do customers prefer well-designed Voice AI Agents over traditional phone systems? The answer comes down to friction, or rather, the lack of it.

No hold times. No repeating information. No navigating seven menu levels to reach a human.

Good voicebots know when to escalate, so customers aren’t trapped in automation loops. Personalization—using account data to make customer conversations feel relevant—changes the experience from generic to genuinely helpful. Providing customers with natural conversations instead of scripted dead ends is what separates advanced AI from legacy IVR.

Challenges of AI voicebots and how to address them

Honest assessment of limitations builds trust. Here’s what enterprises typically encounter.

Handling complex or emotional conversations

Some issues require human empathy. Good platforms detect escalation triggers—frustration in voice tone, repeated failed attempts, explicit requests for a human—and route accordingly.
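Here is a minimal sketch of how those three triggers could be checked on each turn. It assumes an upstream component already produces a frustration score between 0 and 1 from the caller's voice; the phrase list, thresholds, and reason labels are hypothetical and would need tuning against real calls.

```python
from typing import Optional

# Hypothetical phrases that signal an explicit request for a person.
HUMAN_REQUEST_PHRASES = ("human", "agent", "representative", "real person")


def escalation_reason(utterance: str, frustration: float,
                      failed_attempts: int,
                      frustration_threshold: float = 0.7,
                      max_failed_attempts: int = 2) -> Optional[str]:
    """Return why the call should go to a human, or None to keep going."""
    lowered = utterance.lower()
    if any(phrase in lowered for phrase in HUMAN_REQUEST_PHRASES):
        return "explicit_request"
    if frustration >= frustration_threshold:
        return "caller_frustration"
    if failed_attempts >= max_failed_attempts:
        return "repeated_failures"
    return None


print(escalation_reason("Let me talk to a real person", 0.1, 0))
print(escalation_reason("This is still not working", 0.9, 1))
print(escalation_reason("Okay, thanks", 0.1, 0))
```

The three calls print `explicit_request`, `caller_frustration`, and `None`. Checking the explicit request first is a deliberate choice in this sketch: if a caller asks for a person, nothing else should delay the handoff.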

Accuracy in noisy environments

Background noise affects speech recognition. Adaptive audio processing and noise cancellation help, though accuracy in truly chaotic environments remains a challenge.

Privacy and data security requirements

Voice data is sensitive. Compliance with GDPR, PCI DSS, and industry-specific regulations requires encryption, policies for handling data in real time, and clear consent mechanisms.

Integration with legacy systems

Many enterprises run older technology. API flexibility and pre-built connectors for common systems reduce integration complexity, though some custom work is often unavoidable.

How enterprises should evaluate a conversational AI platform for voice

Evaluation criteria matter more than vendor marketing.

Transparency and governance capabilities

Can you see how the AI makes decisions? Are there audit trails? For regulated industries, this isn’t optional.

True omnichannel vs multi-channel architecture

Does context persist across channels, or do customers start over? The difference defines the customer experience.

Brand voice customization

Can you operationalize your tone, terminology, and standards—not generic scripts?

Integration flexibility with existing systems

Does the platform connect to your CRM, knowledge base, and contact center infrastructure?

Vendor partnership vs vendor relationship

Will they act as a strategic partner or just sell software? The implementation and optimization phases reveal the answer.

Getting started with voicebot conversational AI for customer care

For enterprises ready to implement voice AI bots, a practical sequence helps avoid common pitfalls.

- Define your primary use cases. Start with high-volume, routine inquiries where automation has clear ROI. Device troubleshooting, account inquiries, and appointment scheduling are common starting points.

- Audit your current customer journey. Map where customers experience friction or long wait times. Pain points indicate where voicebots can have immediate impact.

- Select a platform with enterprise guardrails. Prioritize transparency, governance, and compliance capabilities. The ability to see and control AI decisions matters more than flashy demos.

- Pilot with measurable success criteria. Define containment rate, CSAT, and cost-per-contact targets before launch. A/B testing against existing processes provides a clear comparison.

- Scale with continuous optimization. Use analytics to refine and expand to additional use cases.

Why your voice AI should connect to the entire customer journey

Deploying automated systems with voice AI in isolation creates fragmented experiences. A customer who troubleshoots via voicebot, then chats with an agent, then calls back shouldn’t feel like they’re starting from scratch each time.

The best conversational AI voice bot maintains one unbroken conversation across voice, chat, SMS, and human handoffs.

Context, history, and nuance carry through—whether the interaction happens on smart speakers, phone lines, or digital channels. Conversational AI systems built with this continuity in mind are what truly assist customers and boost customer engagement rather than just automate touchpoints.

Ready to see how it works? Book a demo.

FAQs about conversational AI use cases

What is the difference between a voicebot and a chatbot with voice?

A chatbot with voice adds speech input/output to a text-based bot. A true voicebot is purpose-built for voice interactions with optimized dialogue management and audio processing for phone conversations.

Can AI voicebots handle multiple languages in the same conversation?

Many enterprise platforms offer multilingual support and can detect language switches mid-conversation, though accuracy varies by platform and language pair.

How do voicebots integrate with existing contact center software?

Most platforms offer APIs and pre-built connectors for common contact center systems, CRMs, and telephony infrastructure. Integration complexity depends on your existing tech stack.

What is the typical ROI timeline for enterprise voicebot implementation?

Most enterprises see measurable cost savings and efficiency gains within the first few months of deployment, with ROI accelerating as the AI learns and expands to additional use cases.

What happens when conversational AI cannot resolve a customer’s issue?

Well-designed systems recognize their limitations and hand off to human agents with full conversation context, so customers don’t have to repeat themselves.

Can customers still reach a human agent when using a voicebot?

Yes. Well-designed platforms detect when human escalation is appropriate and transfer the call with full context, so customers don’t repeat themselves.