Machine learning is hard work. Sure, it only takes a few minutes to knock out a simple tutorial where you’re training a classifier on the famous iris dataset, but training a big model to do something truly valuable, like interacting with customers over a chat interface, is a much greater challenge.

Transfer learning offers one possible solution to this problem. By making it possible to train a model in one domain and reuse it in another, transfer learning can reduce demands on your engineering team by a substantial amount.

Today, we’re going to get into transfer learning, defining what it is, how it works, where it can be applied, and the advantages it offers.

Let’s get going!

What is Transfer Learning in AI?

In the abstract, transfer learning refers to any situation in which knowledge from one task, problem, or domain is transferred to another. If you learn how to play the guitar well and then successfully use those same skills to pick up a mandolin, that’s an example of transfer learning.

Speaking specifically about machine learning and artificial intelligence, the idea is very similar. Transfer learning is when you pre-train a model on one task or dataset and then figure out a way to reuse it for another (we’ll talk about methods later).

If you train an image model, for example, it will tend to learn certain low-level features (like curves, edges, and lines) that show up in pretty much all images. This means you could fine-tune the pre-trained model to do something more specialized, like recognizing faces.

Why Transfer Learning is Important in Deep Learning Models

Building a deep neural network requires serious expertise, especially if you’re doing something truly novel or untried.

Transfer learning, while far from trivial, is simply not as taxing. GPT-4 is the kind of project that could only have been tackled by some of Earth’s best engineers, but setting up a fine-tuning pipeline to get it to do good sentiment analysis is a much simpler job.

By lowering the barrier to entry, transfer learning brings advanced AI into reach for a much broader swath of people. For this reason alone, it’s an important development.

Transfer Learning vs. Fine-Tuning

And speaking of fine-tuning, it’s natural to wonder how it’s different from transfer learning.

The simple answer is that fine-tuning is a kind of transfer learning. Transfer learning is a broader concept, and there are other ways to approach it besides fine-tuning.

What are the 5 Types of Transfer Learning?

Broadly speaking, there are five major types of transfer learning, which we’ll discuss in the following sections.

Domain Adaptation

Under the hood, most modern machine learning is really just an application of statistics to particular datasets.

The distribution of the data a particular model sees, therefore, matters a lot. Domain adaptation refers to a family of transfer learning techniques in which a model is (hopefully) trained such that it’s able to handle a shift in distributions from one domain to another (see section 5 of this paper for more technical details).
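
To make the idea of a distribution shift concrete, here is a minimal sketch of one very simple adaptation trick: standardizing each domain with its own statistics so that the two distributions line up. This is an illustration only, not the method described in the paper mentioned above, and every name and number in it is hypothetical.

```python
from statistics import mean, pstdev

def standardize(values):
    """Rescale values to zero mean and unit variance using their own statistics."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

def classify(z):
    """Stand-in for a rule learned on standardized source data: 1 if above average."""
    return 1 if z > 0 else 0

# Source domain the rule was learned on (hypothetical readings).
source = [1.0, 2.0, 3.0, 4.0]
# Target domain: the same kind of quantity, but shifted and rescaled.
target = [110.0, 120.0, 130.0, 140.0]
target_labels = [0, 0, 1, 1]  # used only to measure accuracy, never to adapt

# Without adaptation: source statistics are applied to target data.
src_mu, src_sigma = mean(source), pstdev(source)
naive = [classify((v - src_mu) / src_sigma) for v in target]

# With adaptation: the target is standardized using its own (unlabeled) statistics.
adapted = [classify(z) for z in standardize(target)]

naive_acc = sum(p == y for p, y in zip(naive, target_labels)) / len(target)
adapted_acc = sum(p == y for p, y in zip(adapted, target_labels)) / len(target)
print(naive_acc, adapted_acc)
```

In this toy, the unadapted rule labels every target point as positive because all target values sit far above the source mean, while the adapted version recovers the correct split without ever looking at target labels. Real domain adaptation methods are considerably more sophisticated, but the goal is the same.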

Domain Confusion

Earlier, we referenced the fact that the layers of a neural network can learn representations of particular features – one layer might be good at detecting curves in images, for example.

It’s possible to structure our training such that a model learns more domain-invariant features, i.e. features that are likely to show up across multiple domains of interest. This is known as domain confusion because, in effect, we’re making the domains look as similar as possible from the model’s point of view.

Multitask Learning

Multitask learning is arguably not even a type of transfer learning, but it came up repeatedly in our research, so we’re adding a section about it here.

Multitask learning is what it sounds like: rather than simply training a model on a single task (e.g. detecting humans in images), you attempt to train it to do several things at once.

The debate about whether multitask learning is really transfer learning stems from the fact that transfer learning generally revolves around adapting a pre-trained model to a new task, rather than having it learn to do more than one thing at a time.
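
For contrast with the transfer setups discussed elsewhere, here is a minimal sketch of what training on several tasks at once can look like. It assumes a deliberately tiny model, invented for illustration: one shared weight `w` feeds two task-specific weights `a` and `b`, and a single combined loss updates all three together.

```python
def joint_loss(w, a, b, data):
    """Mean squared error summed over both tasks."""
    return sum((a * w * x - y1) ** 2 + (b * w * x - y2) ** 2
               for x, y1, y2 in data) / len(data)

def train_multitask(data, lr=0.05, steps=2000):
    """Gradient descent on the joint loss; w is shared, a and b are per-task heads."""
    w, a, b = 1.0, 0.0, 0.0
    n = len(data)
    for _ in range(steps):
        grad_w = grad_a = grad_b = 0.0
        for x, y1, y2 in data:
            h = w * x                # shared representation
            err1 = a * h - y1        # error on task 1
            err2 = b * h - y2        # error on task 2
            grad_a += 2 * err1 * h / n
            grad_b += 2 * err2 * h / n
            # The shared weight receives gradient from both tasks.
            grad_w += 2 * (err1 * a + err2 * b) * x / n
        w, a, b = w - lr * grad_w, a - lr * grad_a, b - lr * grad_b
    return w, a, b

# Hypothetical data: task 1 wants y = 2x, task 2 wants y = -3x.
data = [(x, 2 * x, -3 * x) for x in (-1.0, -0.5, 0.5, 1.0)]
w, a, b = train_multitask(data)
final_loss = joint_loss(w, a, b, data)
print(round(a * w, 3), round(b * w, 3), final_loss < 1e-6)
```

Notice that nothing here is pre-trained and then adapted: both tasks shape the shared weight from the first step, which is exactly why some people hesitate to call this transfer learning.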

One-Shot Learning

One thing that distinguishes machine learning from human learning is that the former requires much more data. A human child will probably only need to see two or three apples before they learn to tell apples from oranges, but an ML model might need to see thousands of examples of each.

But what if that weren’t necessary? The field of one-shot learning addresses the task of learning, for example, object categories from either one example or a small number of them. This idea was pioneered in “One-Shot Learning of Object Categories”, a watershed paper co-authored by Fei-Fei Li and her collaborators. Their Bayesian one-shot learner was able “…to incorporate prior knowledge of the object world into the learning scheme”, and it outperformed a variety of other models in object recognition tasks.
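
As a rough illustration of learning from a single example per class, the sketch below uses nearest-neighbor matching in a feature space. This is a swapped-in, much simpler technique than the Bayesian learner from the paper, and it assumes a hypothetical pre-trained model has already turned each item into a pair of features. All names and numbers are made up.

```python
import math

# One labeled example per class, already mapped into a feature space by a
# hypothetical pre-trained model. Features: (redness, elongation).
SUPPORT_SET = {
    "apple": (0.9, 0.1),
    "banana": (0.2, 0.9),
}

def classify(features):
    """Label a new item with the class of its nearest single example."""
    return min(SUPPORT_SET, key=lambda label: math.dist(features, SUPPORT_SET[label]))

print(classify((0.8, 0.2)))  # close to the single apple example
print(classify((0.3, 0.7)))  # close to the single banana example
```

The prior knowledge lives in the feature space: because the features already separate fruit sensibly, one example per class is enough to draw a usable boundary.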

Zero-Shot Learning

Of course, there might be other tasks (like translating a rare or endangered language) for which it is effectively impossible to obtain labeled data for a model to train on. In such a case, you’d want to use zero-shot learning, which is a type of transfer learning.

With zero-shot learning, the basic idea is to learn features in one data set (like images of cats) that allow successful performance on a different data set (like images of dogs). Humans have little problem with this, because we’re able to rapidly learn similarities between types of entities. We can see that dogs and cats both have tails, both have fur, etc. Machines can perform the same feat if the new classes are described in terms the model already understands, such as shared attributes or text descriptions.
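
One common way to set this up is to describe every class, including ones the model has never seen, by a list of attributes. The sketch below assumes hypothetical attribute detectors that were trained only on horses and tigers. Their scores for a new photo are matched against attribute signatures, one of which belongs to an unseen class. The class names, attributes, and scores are all invented for illustration.

```python
# Attribute signatures: (has_stripes, has_hooves, has_mane). Values are hypothetical.
CLASS_ATTRIBUTES = {
    "horse": (0, 1, 1),  # seen during training
    "tiger": (1, 0, 0),  # seen during training
    "zebra": (1, 1, 1),  # never seen: known only through its description
}

def predict_class(attribute_scores):
    """Return the class whose attribute signature is closest to the given scores."""
    def distance(signature):
        return sum((s - a) ** 2 for s, a in zip(signature, attribute_scores))
    return min(CLASS_ATTRIBUTES, key=lambda name: distance(CLASS_ATTRIBUTES[name]))

# Pretend output of the attribute detectors when shown a photo of a zebra.
zebra_photo_scores = (0.9, 0.8, 0.7)
print(predict_class(zebra_photo_scores))  # prints: zebra
```

No zebra image was ever used for training. The knowledge about stripes, hooves, and manes transferred from the seen classes, and the description of the new class did the rest.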

How Does Transfer Learning Work?

There are a few different ways you can go about utilizing transfer learning processes in your own projects.

Perhaps the most basic is to use a good pre-trained model off the shelf as a feature extractor. This means keeping the pre-trained model’s weights fixed, replacing its final layer with a layer custom-built for your purposes, and training only that new layer. You could take the famous AlexNet image classifier, remove its last classification layer, and replace it with your own, for example.

Or, you could fine-tune the pre-trained model instead. This is a more involved engineering task: you continue training the pre-trained model on your own data so that its internal weights become better suited to a narrower application. This will often mean that you have to freeze certain layers in your model so that their weights don’t change, while allowing the weights in other layers to be updated.
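
The difference between the two approaches comes down to which weights are allowed to move. The following is a minimal sketch using a toy two-parameter model, `y = head * (body * x)`, where `body` stands in for the pre-trained layers and `head` for the final layer. The model, the `train` helper, and the data are assumptions made up for illustration; a real project would use a deep learning framework and an actual pre-trained network.

```python
def train(params, frozen, data, lr, steps=200):
    """Gradient descent on squared error for y = head * (body * x).

    Parameters whose names appear in `frozen` are never updated.
    """
    params = dict(params)  # work on a copy so the caller's weights are untouched
    n = len(data)
    for _ in range(steps):
        grads = {"body": 0.0, "head": 0.0}
        for x, y in data:
            err = params["head"] * params["body"] * x - y
            grads["body"] += 2 * err * params["head"] * x / n
            grads["head"] += 2 * err * params["body"] * x / n
        for name in params:
            if name not in frozen:
                params[name] -= lr * grads[name]
    return params

# Pretend these weights were learned on a source task where y = 2x.
pretrained = {"body": 2.0, "head": 1.0}
# The new task wants y = 6x (hypothetical data).
new_task = [(1.0, 6.0), (2.0, 12.0), (-1.0, -6.0)]

# Feature extraction: keep the body frozen, swap in a fresh head, train only the head.
feature_extractor = train({"body": pretrained["body"], "head": 0.0},
                          frozen={"body"}, data=new_task, lr=0.05)

# Fine-tuning: start from the pre-trained weights and let everything move,
# here with a smaller learning rate so the weights change gradually.
fine_tuned = train(pretrained, frozen=set(), data=new_task, lr=0.01)

print(feature_extractor)
print(fine_tuned)
```

Both runs end up fitting the new task, but only the fine-tuned model changes its `body` weight. Freezing some layers and not others, as described above, amounts to putting just those layer names in `frozen`.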

What are the Applications of Transfer Learning?

As machine learning and deep learning have grown in importance, so too has transfer learning become more crucial. It now shows up in a variety of different industries. The following are some high-level indications of where you might see transfer learning being applied.

Speech recognition across languages: Teaching machines to recognize and process spoken language is an important area of AI research and will be of special interest to those who operate contact centers. Transfer learning can be used to take a model trained in a language like French and repurpose it for Spanish.

Training general-purpose game engines: If you’ve spent any time playing games like chess or Go, you know that they’re fairly different. But, at a high enough level of abstraction, they still share many features. That’s why transfer learning can be used to train up a model on one game and, under certain conditions, use it in another.

Object recognition and segmentation: Our Jetsons-like future will take a lot longer to get here if our robots can’t learn to distinguish between basic objects. This is why object recognition and object segmentation are both such important areas of research. Transfer learning is one way of speeding up this process. If models can learn to recognize dogs and then quickly be re-purposed for recognizing muffins, then we’ll soon be able to outsource both pet care and cooking breakfast.

Applying Natural Language Processing: For a long time, computer vision was the major use case of high-end, high-performance AI. But with the release of ChatGPT and other large language models, NLP has taken center stage. Because much of the modern NLP pipeline involves word vector embeddings, it’s often possible to use a baseline, pre-trained NLP model in applications like topic modeling, document classification, or spicing up your chatbot so it doesn’t sound so much like a machine.
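
To give a flavor of how pre-trained word embeddings get reused, here is a minimal sketch of a tiny topic classifier. It assumes a hypothetical table of three-dimensional word vectors standing in for real pre-trained embeddings (which typically have hundreds of dimensions), averages the vectors in a message, and picks the topic with the highest cosine similarity. The vocabulary, vectors, and topic names are invented.

```python
import math

# Hypothetical stand-in for a table of pre-trained word vectors.
EMBEDDINGS = {
    "refund": (0.9, 0.1, 0.0),
    "invoice": (0.8, 0.2, 0.1),
    "charge": (0.85, 0.15, 0.05),
    "password": (0.1, 0.9, 0.1),
    "login": (0.0, 0.8, 0.2),
    "reset": (0.1, 0.85, 0.15),
}

def embed_text(text):
    """Average the vectors of known words; unknown words are skipped."""
    vectors = [EMBEDDINGS[w] for w in text.lower().split() if w in EMBEDDINGS]
    if not vectors:
        raise ValueError("no known words in text")
    return tuple(sum(dim) / len(vectors) for dim in zip(*vectors))

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Each topic is represented by the average vector of a couple of seed words.
TOPICS = {
    "billing": embed_text("refund invoice"),
    "account": embed_text("password login"),
}

def classify(text):
    """Return the topic whose seed vector is most similar to the text."""
    vec = embed_text(text)
    return max(TOPICS, key=lambda topic: cosine(vec, TOPICS[topic]))

print(classify("please reset my login"))
print(classify("unexpected charge"))
```

Neither "reset" nor "charge" appears among the seed words, yet both messages land in a sensible topic because the embeddings already place related words near each other. That reuse of previously learned structure is the transfer.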

What are the Benefits of Transfer Learning?

Transfer learning has become so popular precisely because it offers so many advantages.

For one thing, it can dramatically reduce the amount of time it takes to train a new model. Because you’re using a pre-trained model as the foundation for a new, task-specific model, far fewer engineering hours have to be spent to get good results.

There are also a variety of situations in which transfer learning can actually improve performance. If you’re using a good pre-trained model that was trained on a general enough dataset, many of the features it learned will carry over to the new task.

This is especially true if you’re working in a domain where there is relatively little data to work with. It might simply not be possible to train a big, cutting-edge model on a limited dataset, but it will often be possible to use a pre-trained model that is fine-tuned on that limited dataset.

What’s more, transfer learning can work to prevent the ever-present problem of overfitting. Overfitting has several definitions depending on what resource you consult, but a common way of thinking about it is when the model is complex enough relative to the data that it begins learning noise instead of just signal.

That means that it may do spectacularly well in training only to generalize poorly when it’s shown fresh data. Transfer learning doesn’t completely rule out this possibility, but it makes it less likely to happen.

Transfer learning also has the advantage of being quite flexible. You can use transfer learning for everything from computer vision to natural language processing, and many domains besides.

Relatedly, transfer learning makes it possible for your model to expand into new frontiers. When done correctly, a pre-trained model can be deployed to solve an entirely new problem, even when the underlying data is very different from what it was shown before.

When To Use Transfer Learning

The list of benefits we just enumerated also offers a clue as to when it makes sense to use transfer learning.

Basically, you should consider using transfer learning whenever you have limited data, limited computing resources, or limited engineering brain cycles you can throw at a problem. This will often wind up being the case, so whenever you’re setting your sights on a new goal, it can make sense to spend some time seeing if you can’t get there more quickly by simply using transfer learning instead of training a bespoke model from scratch.

Check out the second video in Quiq’s LLM Intuitions series, created by our Head of AI, Kyle McIntyre, to learn about one of the oldest forms of transfer learning: word embeddings.

Transfer Learning and You

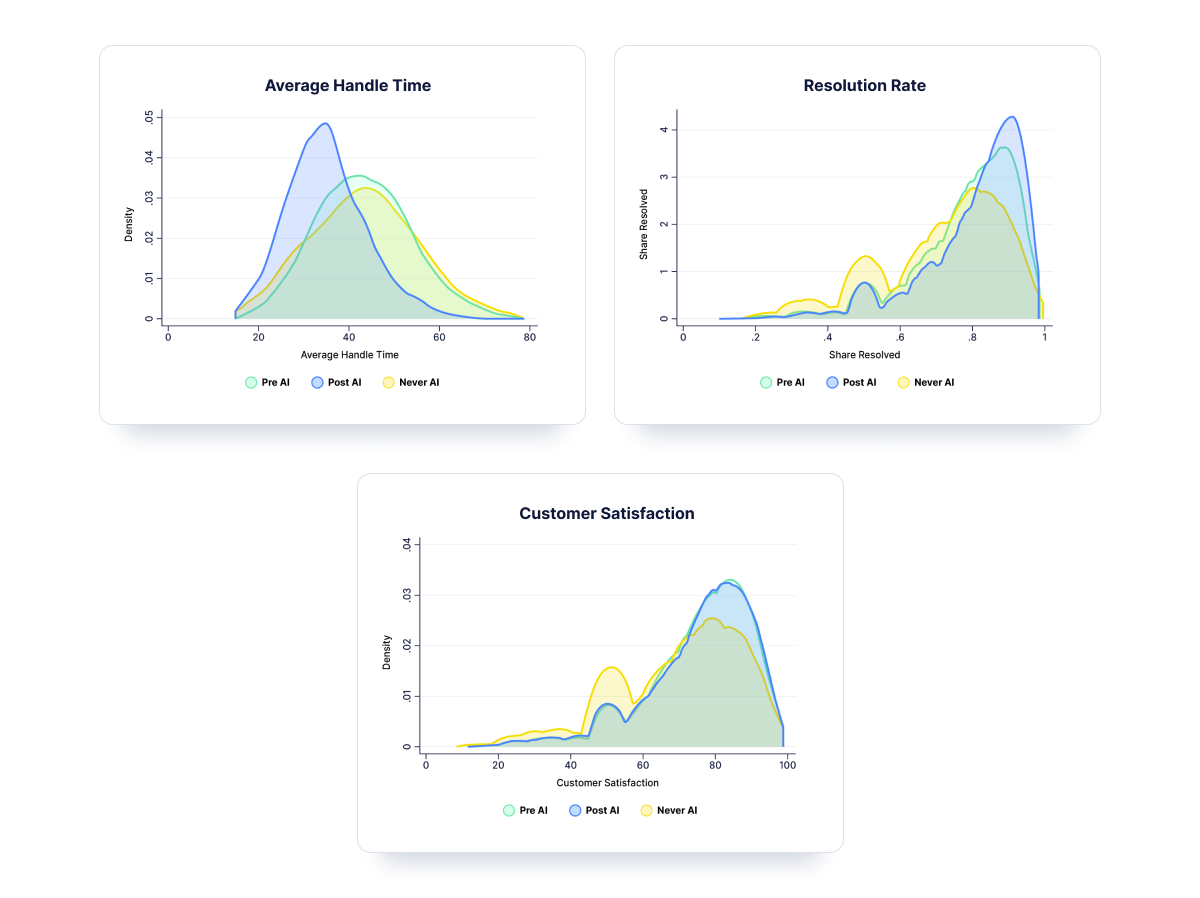

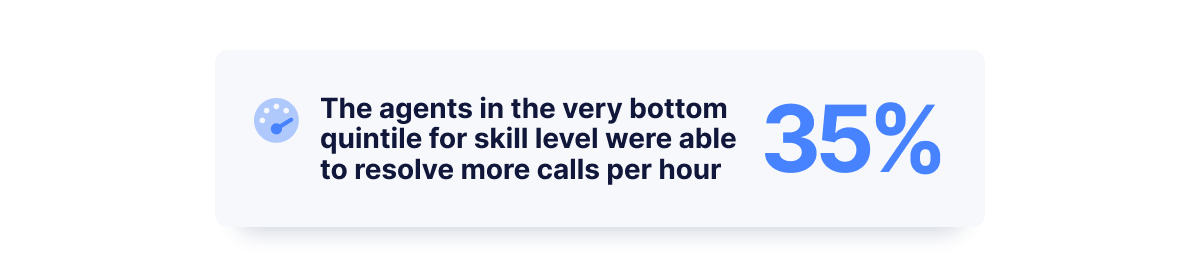

In the contact center space, we understand how difficult it can be to effectively apply new technologies to solve our problems. It’s one thing to put together a model for a school project, and quite another to have it tactfully respond to customers who might be frustrated or confused.

Transfer learning is one way that you can get more bang for your engineering buck. By training a model on one task or dataset and using it on another, you can reduce your technical budget while still getting great results.

You could also just rely on us to transfer our decades of learning on your behalf (see what we did there?). We’ve built an industry-leading conversational AI chat platform that is changing the game in contact centers. Reach out today to see how Quiq can help you leverage the latest advances in AI, without the hassle.