Over the past few months, we’ve had a lot to say about artificial intelligence, its new frontiers, and the ways in which it is changing the customer service industry.

A natural extension of this analysis is looking at the use of AI in retail. That is our mission today. We’ll look at how techniques like natural language processing and computer vision will impact retail, along with some of the benefits and challenges of this approach.

Let’s get going!

How is AI Used in Retail?

AI is poised to change retail, as it is changing many other industries. In the sections that follow, we’ll talk through three primary AI technologies that are driving these changes, namely natural language processing, computer vision, and machine learning more broadly.

Natural Language Processing

Natural language processing (NLP) refers to a branch of machine learning that attempts to work with spoken or written language algorithmically. Together with computer vision, it is one of the best-researched and most successful attempts to advance AI since the field was founded some seven decades ago.

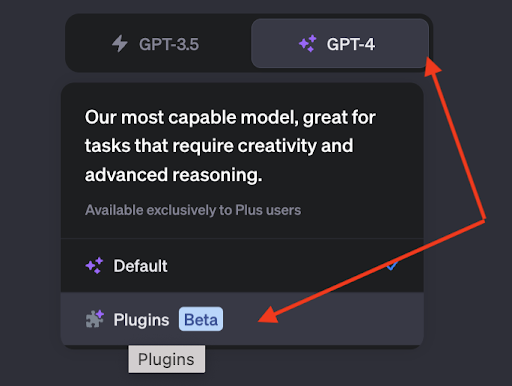

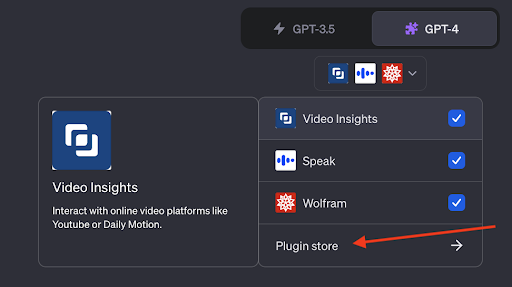

Of course, these days the main NLP applications everyone has heard of are large language models like ChatGPT. This is not the only way AI assistants will change retail, but it is a big one, so that’s where we’ll start.

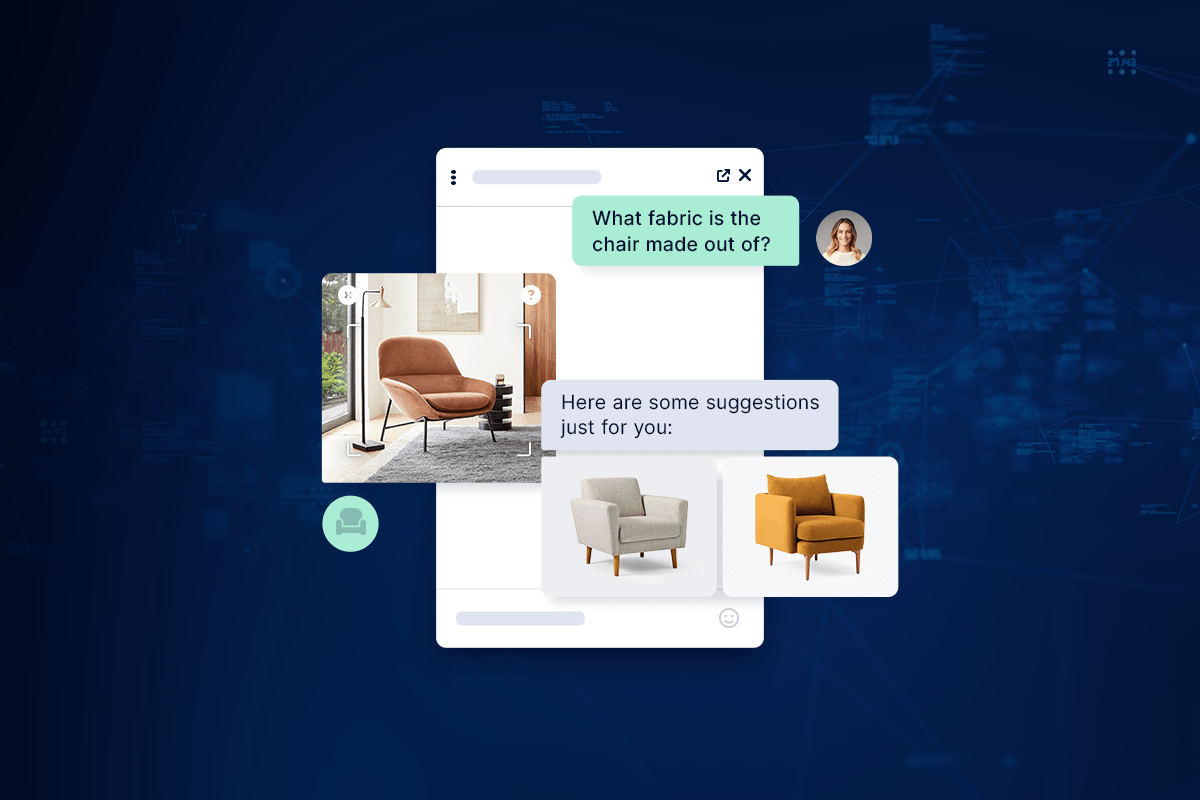

An obvious place to use LLMs in retail is with chatbots. Some customer interactions involve very specific questions that need to be handled by a human customer service agent, but many are fairly routine, consisting of things like “How do I return this item?” or “Can you help me unlock my account?” For these sorts of issues, today’s chatbots are already powerful enough to help in most situations.

A related use case for AI in retail is asking questions about specific items. A customer might want to know what fabric an article of clothing is made out of or how it should be cleaned, for example. An out-of-the-box model like ChatGPT won’t be able to help much, but if you use a service like Quiq’s conversational CX platform, it’s possible to finetune an LLM on your specific documentation. Such a model will be able to help customers find the answers they need.
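To make the idea of answering from your own documentation more concrete, here is a minimal sketch. It does not finetune anything, and it is not a description of how Quiq’s platform works; it only picks the documentation snippet that shares the most words with a customer’s question, the kind of lookup whose result could then be handed to an LLM. The `PRODUCT_DOCS` entries and the function names are hypothetical.

```python
import re

# Hypothetical product documentation; a real catalog would be far larger.
PRODUCT_DOCS = {
    "linen-shirt": "This shirt is made of 100% linen fabric. Machine wash cold and hang to dry.",
    "wool-sweater": "This sweater is made of merino wool. Hand wash only and lay flat to dry.",
    "returns-policy": "Items can be returned within 30 days with a receipt.",
}

def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def best_snippet(question, docs=PRODUCT_DOCS):
    """Return the (doc_id, text) pair sharing the most words with the question."""
    question_words = tokenize(question)
    return max(docs.items(), key=lambda item: len(question_words & tokenize(item[1])))

doc_id, text = best_snippet("How should I wash the wool sweater?")
print(doc_id, "->", text)
```

Word overlap is a deliberately crude stand-in for the matching a production system would do, but it shows the basic shape: the customer’s question is matched against your documentation before an answer is produced.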

These use cases are all centered around text-based interactions, but algorithms are getting better and better at both speech recognition and speech synthesis. You’ve no doubt had the distinct (dis)pleasure of interacting with an automated system that sounded very artificial and lacked the flexibility to actually help you very much. Someday soon, though, you may not be able to tell from a short conversation whether you are talking to a human or a machine.

This may cause a certain amount of concern over technological unemployment. If chatbots and similar AI assistants are doing all this, what will be left for flesh-and-blood human workers? Frankly, it’s too early to say, but the evidence so far suggests that not only is AI not making us obsolete, it’s actually making workers more productive and less prone to burnout.

Computer Vision

Computer vision is the other major triumph of machine learning. CV algorithms have been created that can recognize faces, recognize thousands of different types of objects, and even help with steering autonomous vehicles.

How does any of this help with retail?

We already hinted at one use case in the previous paragraph, i.e. automatically identifying different items. This has major implications for inventory management, but when paired with technologies like virtual reality and augmented reality, it could completely transform the ways in which people shop.

Many platforms already offer the ability to see furniture and similar items in a customer’s actual living space, and there are efforts underway to build tools that automatically measure customers so they know exactly which clothes to try on.

CV is also making it easier to gather and analyze different metrics crucial to a retail enterprise’s success. Algorithms can watch customer foot traffic to identify potential hotspots, meaning that these businesses can figure out which items to offer more of and which to cut altogether.
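As a rough illustration of the hotspot idea, the sketch below assumes a computer-vision system has already turned camera footage into (x, y) floor positions measured in meters. That detection step is the hard part and is not shown. The function name, the cell size, and the sample detections are all hypothetical.

```python
from collections import Counter

def hotspot_cells(positions, cell_size=2.0, top_n=3):
    """Bin (x, y) floor positions into square cells and return the busiest ones."""
    counts = Counter(
        (int(x // cell_size), int(y // cell_size)) for x, y in positions
    )
    return counts.most_common(top_n)

# Hypothetical detections: (x, y) positions in meters from a tracking system.
detections = [(1.0, 1.5), (1.2, 0.4), (0.3, 1.9), (5.1, 5.2), (5.9, 4.3), (9.0, 0.5)]
print(hotspot_cells(detections, top_n=2))
```

Each result pairs a grid cell with the number of detections that fell inside it, so the cells at the top of the list are the parts of the floor that see the most traffic.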

Machine Learning

As we stated earlier, both natural language processing and computer vision are types of machine learning. We gave them their own sections because they’re so big and important, but they’re not the only ways in which machine learning will impact retail.

Another way is with increasingly personalized recommendations. If you’ve ever taken the advice of Netflix or Spotify as to what entertainment you should consume next, then you’ve already made contact with a recommendation engine. But with more data and smarter algorithms, personalization will become much more, well, personalized.

In concrete terms, this means it will become easier and easier to analyze a customer’s past buying history to offer them tailor-made solutions to their problems. Retail is all about consumer satisfaction, so this is poised to be a major development.
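One simple way to turn buying history into suggestions is to count which items tend to be bought together. The sketch below is an illustrative baseline under that assumption, not a description of any particular vendor’s recommendation engine; the order data and function names are made up for the example.

```python
from collections import Counter
from itertools import combinations

def build_co_purchase_counts(orders):
    """Count how often each pair of items appears in the same order."""
    pair_counts = Counter()
    for order in orders:
        for a, b in combinations(sorted(set(order)), 2):
            pair_counts[(a, b)] += 1
    return pair_counts

def recommend(history, pair_counts, top_n=2):
    """Suggest items most often bought alongside what the customer already owns."""
    owned = set(history)
    scores = Counter()
    for (a, b), count in pair_counts.items():
        if a in owned and b not in owned:
            scores[b] += count
        elif b in owned and a not in owned:
            scores[a] += count
    return [item for item, _ in scores.most_common(top_n)]

# Hypothetical past orders.
orders = [
    ["concert t-shirt", "hat"],
    ["concert t-shirt", "hat", "poster"],
    ["concert t-shirt", "hat"],
    ["jeans", "belt"],
]
pair_counts = build_co_purchase_counts(orders)
print(recommend(["concert t-shirt"], pair_counts))
```

Real recommendation systems draw on far richer signals, but co-purchase counts capture the core intuition: customers who bought one item often want the things other buyers picked up with it.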

Machine learning has long been used for inventory management, demand forecasting, etc., and the role it plays in these efforts will only grow with time. Having more data will mean being able to make more fine-grained predictions. You’ll be able to start printing Taylor Swift t-shirts and setting up targeted ads as soon as people in your area begin buying tickets to her show next month, for example.
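For a sense of what a very basic demand forecast looks like, here is a sketch of simple exponential smoothing, a classic technique that blends each new sales figure with the running estimate. It is only one of many forecasting methods, and the sales numbers and the `alpha` value are illustrative assumptions.

```python
def exponential_smoothing_forecast(sales, alpha=0.5):
    """Forecast next-period demand with simple exponential smoothing.

    An alpha near 1 weights recent periods heavily; an alpha near 0 smooths more.
    """
    if not sales:
        raise ValueError("sales must contain at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = sales[0]
    for observed in sales[1:]:
        # Blend the newest observation with the running estimate.
        level = alpha * observed + (1 - alpha) * level
    return level

weekly_tshirt_sales = [10, 20, 30, 40]  # hypothetical units sold per week
print(exponential_smoothing_forecast(weekly_tshirt_sales))
```

Note that this method lags behind a steadily rising trend, which is one reason more data and richer models, such as ones that account for an upcoming concert, can produce the finer-grained predictions described above.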

Where are AI Assistants Used in Retail?

So far, we’ve spoken in broad terms about the ways in which AI assistants will be used in retail. In these sections, we’ll get more specific and discuss some of the particular locations where these assistants can be deployed.

In Kiosks

Many retail establishments already have kiosks in place that let you swap change for dollars or skip the trip to the DMV. With AI, these will become far more adaptable and useful, able to help customers with a greater variety of transactions.

In Retail Apps

Mobile applications are an obvious place to use recommendations or LLM-based chatbots to help make a sale or get customers what they need.

In Smart Speakers

You’ve probably heard of Alexa, the voice assistant behind Amazon’s smart speakers, which can play music for you or automate certain household tasks. Well, it isn’t hard to imagine smart speakers being used in retail, especially as they get better. They’ll be able to help customers choose clothing, handle returns, or do any of a number of related tasks.

In Smart Mirrors

For more or less the same reason, AI-powered smart mirrors could have a major impact on retail. As computer vision improves, these mirrors will be better able to suggest clothing that looks good on different heights and builds, for example.

What are the Benefits of Using AI in Retail?

The main reason that AI is being used more frequently in retail is that there are so many advantages to this approach. In the next few sections, we’ll talk about some of the specific benefits retail establishments can expect to enjoy from their use of AI.

Better Customer Experience and Engagement

These days, there are tons of ways to get access to the goods and services you need. What tends to separate one retail establishment from another is customer experience and customer engagement. AI can help with both.

We’ve already mentioned how much more personalized AI can make the customer experience, but you might also consider the impact of round-the-clock availability that AI makes possible.

Customer service agents will need to eat and sleep sometimes, but AI never will, which means that it’ll always be available to help a customer solve their problems.

More Selling Opportunities

Cross-selling and upselling are both terms that are probably familiar to you, and they represent substantial opportunities for retail outfits to boost their revenue.

With personalized recommendations, sentiment analysis, and similar machine-learning techniques, it will become much faster and easier to identify additional items that a customer might be interested in.

If a customer has already bought Taylor Swift tickets and a t-shirt, for example, perhaps they’d also like a fetching hat that goes along with their outfit. And if you’ve installed the smart mirrors we talked about earlier, AI will even be able to help them find the right size.

Leaner, More Efficient Operations

Inventory management is a never-ending concern in retail. It’s also one place where algorithmic solutions have been used for a long time. We think this trend will only continue, with operations becoming leaner and more responsive to changing market conditions.

All of this ultimately hinges on the use of AI. Better algorithms and more comprehensive data will make it possible to predict what people will want and when, meaning you don’t have to sit on inventory you don’t need and are less likely to run out of anything that’s selling well.

What are the Challenges of Using AI in Retail?

That being said, there are many challenges to using artificial intelligence in retail. We’ll cover a few of these now so you can decide how much effort you want to put into using AI.

AI Can Still Be Difficult to Use

To be sure, firing up ChatGPT and asking it to recommend an outfit for a concert doesn’t take very long. But this is a far cry from building a full-fledged AI solution into your website or mobile applications. Serious technical expertise is required to train, finetune, deploy, and monitor advanced AI, whether that’s an LLM, a computer-vision system, or anything else, and you’ll need to decide whether you think you’ll get enough return to justify the investment.

Expense

And speaking of investment, it remains pretty expensive to utilize AI at any non-trivial scale. If you decide you want to hire an in-house engineering team to build a bespoke model, you’ll need a substantial budget to pay for the training and the engineers’ salaries. These salaries are still something you’ll have to account for even if you choose to build on top of an existing solution, because finetuning a model is far from easy.

One solution is to utilize an offering like Quiq. We have already created the custom infrastructure required to utilize AI in a retail setting, meaning you wouldn’t need a serious engineering force to get going with AI.

Bias, Abuse, and Toxicity

A perennial concern with using AI is that a model will generate output that is insulting, harmful, or biased in some way. For obvious reasons this is bad for retail establishments, so you’ll want to make sure that you both carefully finetune your models to avoid this behavior and continually monitor them in case their behavior changes in the future. A platform like Quiq can also help reduce this risk.

AI and the Future of Retail

Artificial intelligence has long been expected to change many aspects of our lives, and in the past few years, it has begun delivering on that promise. From ultra-precise recommendations to full-fledged chatbots that help resolve complex issues, retail stands to benefit greatly from this ongoing revolution.

If you want to get in on the action but don’t know where to start, set up a time to check out the Quiq platform. We make it easy to utilize both customer-facing and agent-facing solutions, so you can build an AI-positive business without worrying about the engineering.