Now that we’ve all seen what ChatGPT can do, it’s natural to begin casting about for ways to put it to work. An obvious place to use a generative AI language model is the contact center, where much of the work is text-based: answering customer questions and resolving their issues.

But is ChatGPT ready for the on-the-ground realities of contact centers? What if it responds inappropriately, abuses a customer, or provides inaccurate information?

We at Quiq pride ourselves on being experts in customer experience and customer service, and we’ve been watching recent developments in generative AI for some time. This piece presents our conclusions about what ChatGPT is, the ways in which ChatGPT can be used for customer service, and the techniques that exist to optimize it for this domain.

What is ChatGPT?

ChatGPT is an application built on top of GPT-4, a large language model. Large language models like GPT-4 are trained on huge amounts of textual data, and they gradually learn the statistical patterns present in that data well enough to output their own, new text.

How does this training work? Well, when you hear a sentence like “I’m going to the store to pick up some _____”, you know that the final word is something like “milk”, “bread”, or “groceries”, and probably not “sawdust” or “livestock”.

This is because you’ve been using English for a long time, you’re familiar with what happens at a grocery store, and you have a sense of how a person is likely to describe their exciting adventures there (nothing gets our motor running like picking out the really good avocados).

GPT-4, of course, has none of this context, but if you show it enough examples, it can learn to imitate natural language quite well. During training, it sees the beginning of a passage and tries to predict what comes next, one small chunk of text (a token) at a time.

At first, its predictions are terrible, but with each training step its internal parameters are updated, and it gradually gets better. If you do this for long enough, you get something that can write its own emails, blog posts, research reports, book summaries, poems, and codebases.
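The fill-in-the-blank intuition above can be made concrete with a toy example. The sketch below is not how GPT-4 works internally (GPT-4 is a large neural network, not a lookup table); it simply counts which word follows which in a tiny, made-up corpus and predicts the most common follower. The corpus and the function names are our own assumptions, chosen purely for illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up training corpus (an assumption for illustration only).
corpus = [
    "i am going to the store to pick up some milk",
    "i am going to the store to pick up some bread",
    "i am going to the store to pick up some milk",
    "she went to the farm to pick up some livestock",
]


def train_bigram_model(sentences):
    """Count how often each word follows each other word."""
    follow_counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current_word, next_word in zip(words, words[1:]):
            follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(model, word):
    """Return the most frequently seen follower of `word`, or None if unseen."""
    followers = model.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigram_model(corpus)
print(predict_next(model, "some"))  # prints "milk"
```

Even this crude counter prefers “milk” over “livestock” because it has seen “milk” more often after “some”. A real language model learns far richer patterns, but the training signal is the same idea: guess what comes next, then adjust.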

Can ChatGPT be used for customer service?

Short answer: not on its own.

While tools like ChatGPT can support customer service workflows, relying on them as a standalone solution introduces serious risks. Here’s why.

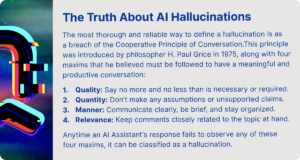

It makes things up (and sounds confident doing it)

One of the biggest issues is hallucination, where the model generates incorrect answers that sound completely believable. In customer service, this can mean:

- Invented refund policies

- Incorrect pricing or product details

- Fake troubleshooting steps

These errors are not rare edge cases. They happen because the model predicts likely words, not verified facts.

Even worse, it often delivers these answers with high confidence, which makes them harder to catch and more damaging to trust.

It doesn’t know your business

ChatGPT is trained on general internet data, not your:

- Internal policies and knowledge base data

- Product catalog

- Customer history and relevant customer queries

- Real-time updates

That means it can’t reliably answer company-specific questions unless heavily customized. Out of the box, it simply lacks context, which leads to vague or irrelevant responses that fail to address customers’ concerns.

For customer support, that’s a deal breaker.

It can’t guarantee accuracy or compliance

In industries like finance, healthcare, or legal services, even small mistakes carry real consequences.

- Companies can be legally liable for incorrect chatbot responses

- Errors in automated responses can lead to compliance violations or financial loss

- Wrong advice can damage customer relationships permanently

Unlike human agents, ChatGPT has no built-in accountability or understanding of risk. If you want accurate responses that actually improve customer satisfaction, relying on ChatGPT alone is dangerous.

It raises data privacy concerns when handling customer inquiries

Using ChatGPT for support often involves sharing customer data. That introduces risk:

- Conversations may be stored or used for training

- Sensitive information could be exposed

- Compliance with regulations like GDPR becomes harder as data gets passed through multiple systems

This is especially problematic for businesses handling personal or financial data.

It lacks true understanding and judgment, ultimately affecting customer experience

ChatGPT can mimic conversation, but it does not:

- Understand customer intent in complex customer issues

- Handle emotional or sensitive situations well and give personalized responses

- Apply judgment in edge cases

It can escalate frustration instead of resolving it, especially when a customer needs nuance or empathy beyond the average call center script.

It still needs human customer service agents to work properly

Even in advanced setups, companies don’t rely on ChatGPT alone. They use:

- Human review and escalation paths

- Structured knowledge bases

- Guardrails and validation layers

Without these, results become inconsistent and risky. In fact, most real-world deployments combine AI with human oversight because fully automated support is still unreliable.

So, where does ChatGPT for customer service actually fit?

ChatGPT works best as a supporting tool, not a replacement. For example:

- Drafting replies for agents

- Summarizing tickets

- Assisting with internal documentation such as knowledge base articles

But when it comes to customer-facing support, especially in high-stakes scenarios, it still falls short without a proper system around it.

Why purpose-built customer service platforms make more sense

If ChatGPT alone is not reliable for handling real customer interactions, the next step is not to abandon AI. It is to use it inside systems that are built for the realities of the customer service space.

This is where platforms like Quiq come in. They take large language models and connect them to real workflows, real data, and real oversight. Instead of acting like a generic customer service chatbot, the AI becomes part of a structured system that customer service leaders can actually trust in production environments.

Built for real customer service interactions

Standalone tools do not reflect how support actually works. Real teams deal with queues, SLAs, escalations, and multiple channels at once.

Purpose-built platforms are designed around these realities and key challenges:

- Intelligent routing ensures customer interactions go to the right customer support agents based on intent, priority, or account value

- Omnichannel support brings chat, voice, SMS, and social messaging into one place, so customer service agents are not switching tools

- Context is preserved across conversations, giving every customer service representative full visibility into previous interactions, orders, and issues

This structure removes friction from daily operations. Instead of reacting to messages one by one, customer service teams can manage volume in a controlled, predictable way. Once you’re past the initial setup, you can easily generate responses that are accurate, helpful and empathetic.

It also improves consistency. When workflows are standardized, every customer interaction follows a defined path, which reduces errors and helps deliver exceptional customer service at scale.
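To make the routing idea from the list above a little more concrete, here is a minimal sketch of rule-based routing by intent, urgency, and account value. Everything in it is hypothetical: the queue names, the `HIGH_VALUE_ACCOUNT` threshold, and the `Conversation` fields are placeholders we invented, not a description of how any particular platform implements routing.

```python
from dataclasses import dataclass

# All queue names and thresholds below are hypothetical placeholders.
QUEUE_BY_INTENT = {
    "billing": "billing_team",
    "technical": "tech_support",
}
DEFAULT_QUEUE = "general_queue"
PRIORITY_QUEUE = "priority_desk"
HIGH_VALUE_ACCOUNT = 50_000.0


@dataclass
class Conversation:
    intent: str           # assumed to come from an upstream intent classifier
    urgent: bool          # assumed flag set by SLA or priority rules
    account_value: float  # assumed annual account value


def route(conversation: Conversation) -> str:
    """Pick a queue: urgent or high-value first, then by intent."""
    if conversation.urgent or conversation.account_value >= HIGH_VALUE_ACCOUNT:
        return PRIORITY_QUEUE
    return QUEUE_BY_INTENT.get(conversation.intent, DEFAULT_QUEUE)


print(route(Conversation(intent="billing", urgent=False, account_value=1200.0)))
```

Keeping the rules in plain data (a dictionary and a couple of constants) is one way to let support leaders adjust routing without touching the logic itself.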

Grounded in your data, not generic knowledge

One of the biggest gaps with a standalone customer service chatbot is that it relies on general knowledge instead of your business context.

Purpose-built platforms solve this by connecting AI directly to:

- Internal knowledge bases and help centers

- CRM systems with detailed customer history

- Order management and billing systems

- Product documentation and release notes

This changes how responses are generated. Instead of guessing, the AI pulls from verified sources tied to your business.
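A rough sketch of what “pulling from verified sources” can look like in code is shown below. It looks up the most relevant knowledge base entry for a question and builds a prompt that restricts the model to that entry; if nothing relevant is found, it returns `None` so the conversation can go to a human instead of letting the model guess. The knowledge base entries, the `min_overlap` cutoff, and the simple word-overlap scoring are all assumptions for illustration. Production systems typically use more capable search, and no call to a real model is made here.

```python
import re

# Hypothetical knowledge base entries; in practice these would come from
# a help center, CRM, or billing system.
KNOWLEDGE_BASE = [
    {"id": "refunds", "text": "Refunds are available within 30 days of purchase."},
    {"id": "shipping", "text": "Standard shipping takes 3 to 5 business days."},
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, knowledge_base, min_overlap=2):
    """Return the entry sharing the most words with the question, or None."""
    question_words = tokenize(question)
    best_entry, best_score = None, 0
    for entry in knowledge_base:
        score = len(question_words & tokenize(entry["text"]))
        if score > best_score:
            best_entry, best_score = entry, score
    return best_entry if best_score >= min_overlap else None


def build_prompt(question, knowledge_base):
    """Build a grounded prompt, or return None to signal a human handoff."""
    entry = retrieve(question, knowledge_base)
    if entry is None:
        return None
    return (
        "Answer using only the source below. "
        "If it does not cover the question, say so.\n"
        f"Source [{entry['id']}]: {entry['text']}\n"
        f"Question: {question}"
    )


print(build_prompt("Are refunds available after purchase?", KNOWLEDGE_BASE))
```

The important design choice is the `None` branch: when the system has no verified source to cite, it declines to answer rather than improvising.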

For example, when handling customer interactions about billing issues or subscription changes, the system can reference real account data instead of producing a generic answer. That leads to fewer mistakes and faster resolutions.

For customer service leaders, this is critical. Accurate answers directly impact customer satisfaction, and grounding AI in real data is what makes that possible.

AI with guardrails and human oversight for true customer satisfaction

Uncontrolled AI is risky. That is why production systems rely on layered safeguards.

Purpose-built platforms introduce guardrails such as:

- Predefined rules that limit what the AI can say in sensitive scenarios

- Confidence thresholds that trigger escalation when the model is unsure

- Approval workflows where customer support agents review or edit responses before they are sent

This ensures that AI supports customer service agents rather than replacing judgment.
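The three guardrails listed above can be combined into a single decision step, sketched below. This is a simplified illustration under stated assumptions: the restricted topics, both thresholds, and the `confidence` score are hypothetical. In particular, language models do not hand you a trustworthy confidence number by default, so we assume some upstream component supplies one.

```python
from dataclasses import dataclass

# Hypothetical policy values; real topics and thresholds are business decisions.
RESTRICTED_TOPICS = ("legal advice", "medical advice", "chargeback")
ESCALATE_BELOW = 0.5  # below this, hand off to a human entirely
AUTO_SEND_AT = 0.9    # at or above this, send without agent approval


@dataclass
class DraftReply:
    text: str
    confidence: float  # assumed score in [0, 1] from an upstream component


def decide_action(customer_message: str, draft: DraftReply) -> str:
    """Return 'escalate', 'agent_review', or 'send' for a drafted reply."""
    message = customer_message.lower()
    # Predefined rule: never let the AI answer restricted topics.
    if any(topic in message for topic in RESTRICTED_TOPICS):
        return "escalate"
    # Confidence threshold: an unsure model triggers escalation.
    if draft.confidence < ESCALATE_BELOW:
        return "escalate"
    # Approval workflow: mid-confidence drafts go to an agent to review or edit.
    if draft.confidence < AUTO_SEND_AT:
        return "agent_review"
    return "send"


print(decide_action("Where is my order?", DraftReply("It ships Monday.", 0.7)))
# prints "agent_review"
```

Note the ordering: the topic rule runs before any confidence check, so a highly confident draft on a restricted topic is still escalated.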

Human oversight remains central when AI answers customer inquiries. Complex or emotionally sensitive customer interactions can be handed off instantly to a customer service representative, while routine questions are handled automatically.

This hybrid approach is what allows companies to scale without sacrificing quality. Customer service teams stay in control, and AI operates within clearly defined boundaries.

Designed for scale in customer support teams, without breaking quality

Scaling support is not just about handling more tickets. It is about maintaining quality across thousands of customer interactions.

Standalone tools often break down under pressure because they lack structure. Purpose-built systems are designed to:

- Handle spikes in volume without overwhelming customer service agents

- Automate repetitive requests like order status, refunds, or FAQs

- Maintain consistent tone and accuracy across every response

They also provide visibility into performance. Customer service leaders can track metrics such as response times, resolution rates, and customer satisfaction across channels.

This makes it easier to identify bottlenecks and improve processes over time.

In fast-growing companies, this kind of infrastructure is essential. Without it, scaling support usually leads to slower responses and inconsistent experiences.

Better outcomes across the entire customer journey

When AI is properly integrated into a customer service platform, its impact goes beyond handling tickets.

It improves the entire experience by:

- Assisting customer service agents with suggested replies based on past customer interactions

- Reducing response times while keeping answers accurate and relevant

- Ensuring consistent communication across every touchpoint, from first contact to resolution

It also supports proactive engagement. Instead of waiting for issues, systems can trigger messages based on behavior, such as abandoned carts or failed payments.

Over time, this leads to stronger relationships and higher customer satisfaction. Customers get faster answers, fewer errors, and a smoother experience overall.

For businesses competing in the customer service space, this is a clear advantage.

Where this leaves ChatGPT

ChatGPT still plays a role, but not as a standalone solution or a customer-facing chatbot.

On its own, it behaves like a general-purpose chatbot, which makes it unreliable for real-world customer service. It lacks the structure, data access, and safeguards needed for consistent performance.

Inside a purpose-built platform, it becomes far more useful. It can assist customer support agents, speed up workflows, and improve response quality, all while operating within controlled systems.

For customer service teams and customer service leaders, the takeaway is simple. AI is valuable, but only when it is implemented in a way that supports real workflows, real data, and real accountability.

Where ChatGPT actually works in customer service

While ChatGPT is not reliable as a standalone solution, it can still improve customer service when used in the right context. The key is keeping it behind the scenes, supporting people instead of replacing them.

Drafting replies for support requests

One of the most practical uses is helping customer service agents respond faster.

Instead of writing every reply from scratch, agents can use ChatGPT to:

- Generate human-like responses based on the context of the request

- Rephrase messages to match tone and clarity

- Turn rough notes into polished replies

This is especially useful when dealing with high volumes of support requests. It reduces writing time without removing human oversight.

Agents still review and edit everything, which keeps responses accurate and aligned with company policies.

Summarizing support tickets and conversations

Customer interactions often span multiple messages, channels, and agents. That makes it hard to quickly understand what is going on.

ChatGPT can:

- Summarize long support tickets into short, clear overviews

- Highlight key issues, actions taken, and next steps

- Help new agents jump into ongoing conversations without confusion

This improves handoffs between customer support agents and reduces the risk of missed details.

It also helps managers review conversations faster and spot patterns across tickets.

Handling repetitive and low-risk queries

For simple, repeatable questions, ChatGPT can assist with first-line responses.

Examples include:

- Basic product questions

- Order status updates

- Account or login guidance

These types of support requests do not usually require deep judgment. When paired with verified data sources, ChatGPT can respond quickly and consistently.

This frees up customer service agents to focus on more complex or sensitive cases, including human interactions that require empathy or decision-making.

Assisting with customer feedback analysis

Customer feedback is often scattered across emails, chats, and surveys.

ChatGPT can help by:

- Grouping feedback into themes or categories

- Identifying recurring complaints or feature requests

- Summarizing large volumes of responses into key insights

This gives customer service teams a clearer view of what customers are saying, without manually reviewing every message.

Over time, this can improve customer service by helping teams prioritize fixes and respond to common issues more effectively.
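To show the shape of this kind of analysis, here is a small sketch that tags feedback with themes and counts how often each theme appears. Note that it swaps in plain keyword matching as a stand-in for the language model, so it is much cruder than what ChatGPT would do (for instance, the keyword “late” would also match “template”). The theme names, keywords, and sample comments are invented for illustration.

```python
from collections import Counter

# Hypothetical themes and keywords; a language model would infer these
# from the text rather than rely on a fixed list.
THEME_KEYWORDS = {
    "shipping": ("late", "delivery", "shipping"),
    "billing": ("charge", "refund", "invoice"),
    "login": ("password", "login", "sign in"),
}


def tag_themes(comment):
    """Return every theme whose keywords appear in the comment."""
    text = comment.lower()
    themes = [
        theme
        for theme, keywords in THEME_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    ]
    return themes or ["other"]


def count_themes(comments):
    """Count how many comments mention each theme."""
    counts = Counter()
    for comment in comments:
        counts.update(tag_themes(comment))
    return counts


feedback = [
    "My delivery was late",
    "I was charged twice and want a refund",
    "Cannot reset my password",
    "Shipping took three weeks",
    "Love the new design",
]
print(count_themes(feedback).most_common(1))  # prints [('shipping', 2)]
```

The output of a step like this (themes ranked by frequency) is what lets a team decide which issue to fix first.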

Supporting agents during difficult conversations

Handling angry customers is one of the hardest parts of the job.

ChatGPT can act as a support tool by:

- Suggesting calm, professional responses in tense situations

- Helping agents adjust tone to avoid escalation

- Providing alternative ways to explain policies or decisions

This does not replace human judgment, but it gives agents a starting point when they are under pressure.

It can be especially helpful for less experienced customer service representatives who are still learning how to manage difficult interactions.

Internal knowledge and training support

ChatGPT is also useful internally, not just in live conversations.

Teams can use it to:

- Answer internal questions about processes or policies

- Generate training materials based on past support tickets

- Help new hires learn how to handle common scenarios

This reduces the time it takes to onboard new customer support agents and ensures more consistent responses across the team.

Final thoughts: ChatGPT is a tool, not a solution

ChatGPT has earned its place in the conversation around AI, and for good reason. It can generate human-like responses, support customer service agents, and speed up how teams handle support requests.

But as you’ve seen, it breaks down in the areas that matter most for real customer interactions and more complex tickets. It lacks business context, struggles with accuracy, introduces risk around compliance and data privacy, and still depends heavily on human oversight to avoid mistakes.

That’s why more customer service leaders are moving away from standalone tools and toward purpose-built platforms like Quiq.

Quiq takes everything that makes ChatGPT useful and removes the parts that make it risky:

- Instead of guessing, it pulls from your actual data and systems

- Instead of operating alone, it works alongside customer support agents with clear guardrails

- Instead of inconsistent answers, it delivers controlled, reliable responses across every customer interaction

- Instead of adding risk, it is built for compliance, security, and real business use

The result is not just faster replies. It is better customer satisfaction, more confident customer service teams, and a system that can handle real-world complexity without falling apart.

If your goal is to improve customer service in a meaningful way, ChatGPT on its own will not get you there. But used within a platform like Quiq, it becomes part of something far more reliable, scalable, and ready for the demands of modern customer service.

Get a free demo of Quiq to find out the real capabilities of AI in CX.