Key Takeaways

- WhatsApp Business offers advanced tools for contact centers – Features like conversation labeling, data export, and language translation make it far more powerful than the personal app.

- Its global reach makes it essential for customer engagement – With more than 2 billion users, WhatsApp enables more personal, conversational connections at scale.

- AI integration boosts efficiency and service quality – Pairing WhatsApp Business with conversational AI streamlines routing, analytics, and agent support.

- Built for secure, scalable, and rich interactions – End-to-end encryption, media sharing, and multi-conversation capacity make it ideal for modern customer communication.

In today’s digital era, businesses continually seek innovative ways to connect with their customers, striving to enhance communication and foster deeper relationships. Enter WhatsApp Business – a game-changer in the realm of digital communication. This powerful tool is not just a messaging app; it’s a bridge between businesses and their customers, offering a plethora of features designed to streamline communication, improve customer service, and boost engagement. Whether you’re a small business owner or part of a global enterprise, understanding the potential of WhatsApp Business could redefine your approach to customer communication.

What is WhatsApp Business?

WhatsApp is an application that supports text messaging, voice messaging, and video calling for over two billion global users. Because it leverages a simple internet connection to send and receive data, WhatsApp users can avoid the fees that once made communication so expensive.

Since WhatsApp already has such a large base of enthusiastic users, many international brands have begun leveraging it to communicate with their own audiences. It also has a number of built-in features that make it an attractive option for businesses wanting to establish a more personal connection with their customers, and we’ll cover those in the next section.

What Features Does WhatsApp Business Have?

Beyond its reach and the fact that it reduces the budget needed for communication, WhatsApp Business has functionality that makes it well suited to any business trying to interact with its customers.

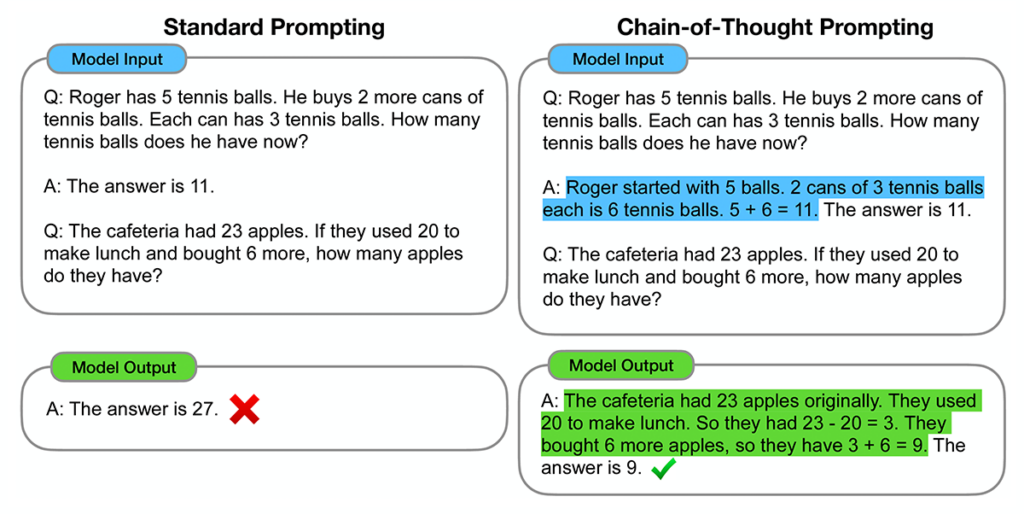

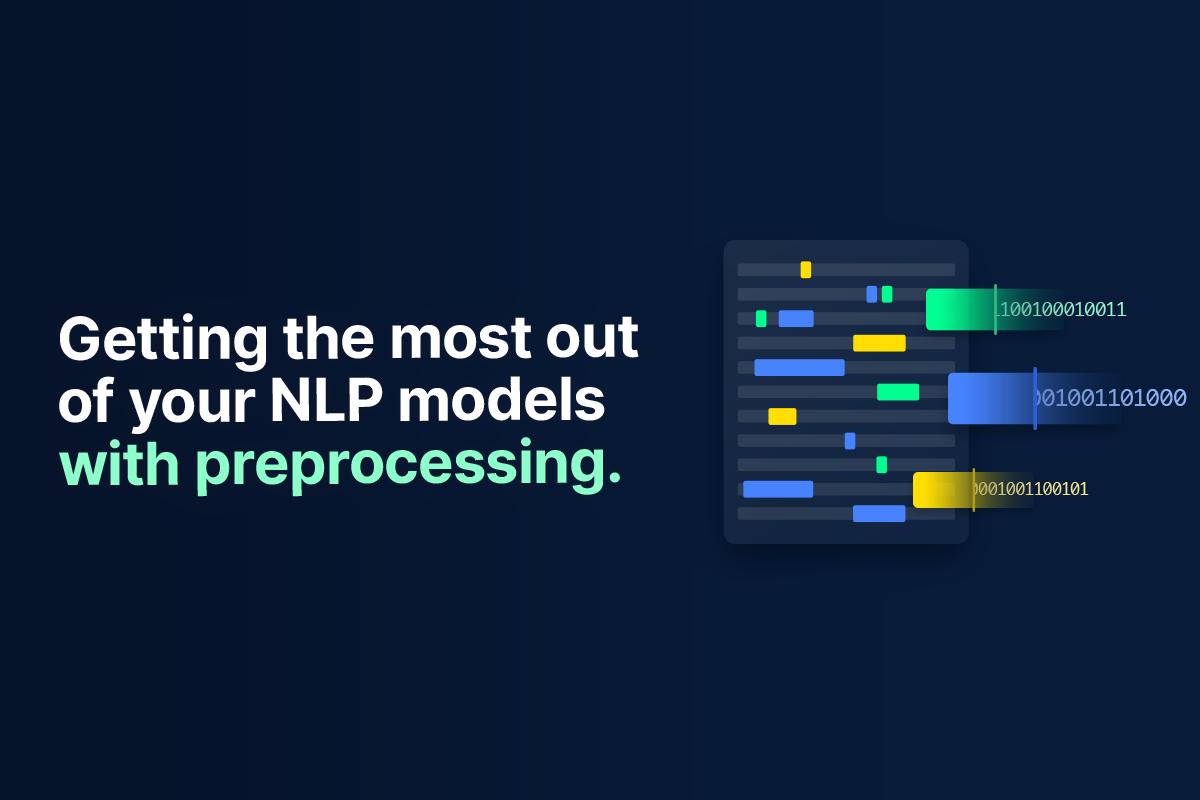

When integrated with a tool like the Quiq conversational AI platform, WhatsApp Business can automatically transcribe voice-based messages. Even better, WhatsApp Business allows you to export these conversations later if you want to analyze them with techniques like natural language processing.
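To make the export-and-analyze idea a little more concrete, here is a minimal Python sketch that parses an exported chat and counts the most common terms. It assumes each message in the export starts with a line shaped like `<date>, <time> - <sender>: <message>`; the actual export layout can vary by device and locale, so treat the pattern, and the helper names `parse_export` and `top_terms`, as illustrative assumptions rather than a documented format.

```python
import re
from collections import Counter

# Assumed line shape (not guaranteed): "<date>, <time> - <sender>: <message>"
LINE_RE = re.compile(
    r"^(?P<date>[^,]+), (?P<time>[^-]+?) - (?P<sender>[^:]+): (?P<text>.*)$"
)


def parse_export(raw: str) -> list[dict]:
    """Turn exported chat text into a list of message dictionaries."""
    messages = []
    for line in raw.splitlines():
        if not line.strip():
            continue  # skip blank lines
        match = LINE_RE.match(line)
        if match:
            messages.append(match.groupdict())
        elif messages:
            # A line without a header is treated as a continuation
            # of the previous (multi-line) message.
            messages[-1]["text"] += "\n" + line
    return messages


def top_terms(messages: list[dict], n: int = 3) -> list[tuple[str, int]]:
    """Count words longer than three letters across all messages."""
    words = Counter()
    for message in messages:
        tokens = re.findall(r"[a-z']+", message["text"].lower())
        words.update(token for token in tokens if len(token) > 3)
    return words.most_common(n)
```

A simple word count like this is only a starting point, but the same list of parsed messages could be handed to whatever natural language processing tooling you prefer.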

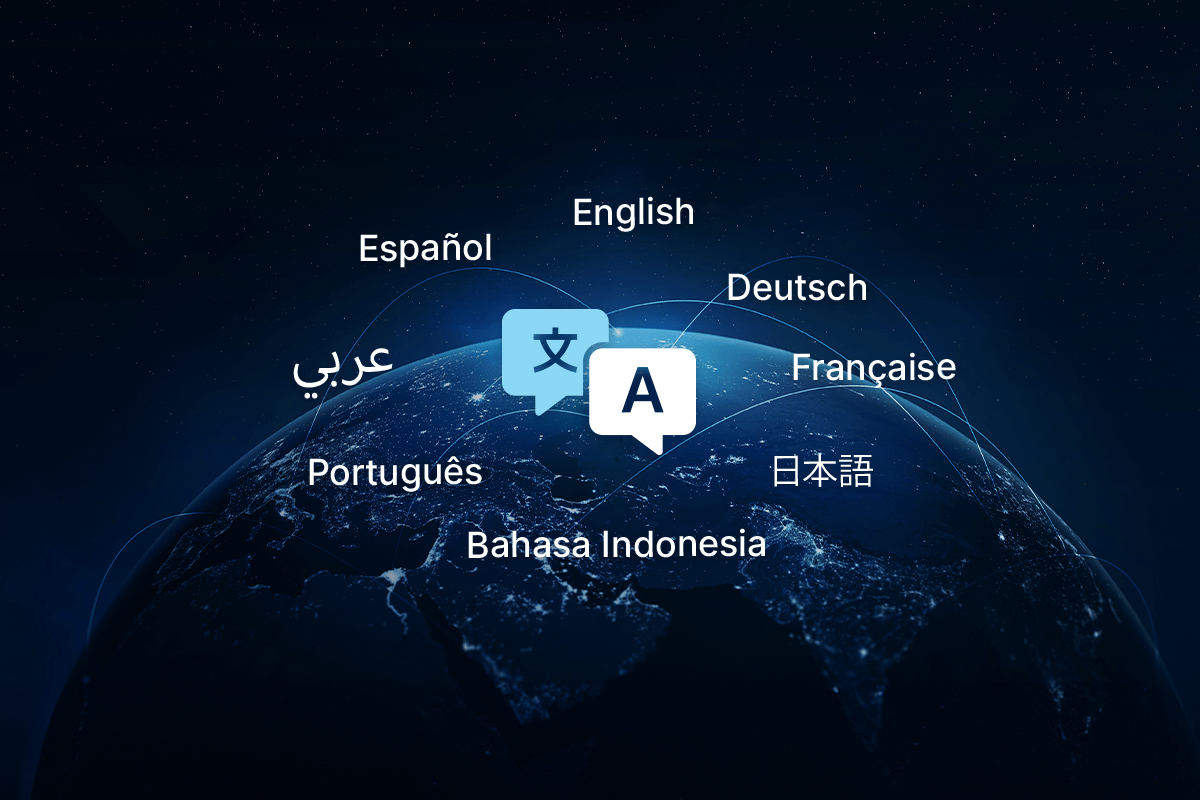

If your contact center agents and the customers they’re communicating with have both set a “preferred language,” WhatsApp can dynamically translate between these languages to make communication easier. So, if a user sends a voice message in Russian and the agent wants to communicate in English, they’ll have no trouble understanding one another.

What are the Differences Between WhatsApp and WhatsApp Business?

Before we move on, it’s worth pointing out that WhatsApp and WhatsApp Business are two different services. On its own, WhatsApp is the most widely used messaging application in the world. Businesses can use WhatsApp to talk to their customers, but with a WhatsApp Business account, they get a few extra perks.

Mostly, these perks revolve around building brand awareness. Unlike a basic WhatsApp account, a WhatsApp Business account allows you to include a lot of additional information about your company and its services. It also provides a labeling system so that you can organize the conversations you have with customers, and a variety of other tools so you can respond quickly and efficiently to any issues that come up.

The Advantages of WhatsApp Messaging for Businesses

Now, let’s spend some time going over the myriad advantages offered by a WhatsApp outreach strategy. Why, in other words, would you choose to use WhatsApp over its many competitors?

Global Reach and Popularity

First, we’ve already mentioned the fact that WhatsApp has achieved worldwide popularity, and in this section, we’ll drill down into more specifics.

When WhatsApp was acquired by Meta in 2014, it already boasted 450 million active users per month. Today, this figure has climbed to a remarkable 2.7 billion, and it’s believed it will reach a dizzying 3.14 billion as early as 2025.

With over 535 million users, India is the country where WhatsApp has gained the most traction by far. Brazil is second with 148 million users, and Indonesia is third with 112 million users.

The gender divide among WhatsApp users is pretty even – men account for just shy of 54% of WhatsApp users, so they have only a slight majority.

The app itself has over 5 billion downloads from the Google Play store alone, and it’s used to send 140 billion messages each day.

These data indicate that WhatsApp could be a very valuable channel to cultivate, regardless of the market you’re looking to serve or where your customers are located.

Personalized Customer Interactions

Second, platforms like WhatsApp enable businesses to customize communication with a level of scale and sophistication previously unavailable.

This customization is powered by machine learning, a technology that has consistently led the charge in the realm of automated content personalization. For example, Spotify’s ability to analyze your listening patterns and suggest music or podcasts that match your interests is powered by machine learning. Now, thanks to advancements in generative AI, similar technology is being applied to text messaging.

Past language models often fell short in providing personalized customer interactions. They tended to be more “rule-based” and, therefore, came off as “mechanical” and “unnatural.” However, contemporary models greatly improve agents’ capacity to adapt their messages to a particular situation.

While none of this suggests generative AI is going to entirely take the place of the distinctive human mode of expression, for a contact center manager aiming to improve customer experience, this marks a considerable step forward.

Below, we have a section talking a little bit more about integrating AI into WhatsApp Business.

End-to-End Encryption

One thing that has always been a selling point for WhatsApp is that it takes security and privacy seriously. This is manifested most obviously in the fact that it encrypts all messages end-to-end.

What does this mean? From the moment you start typing a message to another user all the way through when they read it, the message is protected. Even if another party were to somehow intercept your message, they’d still have to crack the encryption to read it. What’s more, all of this is enabled by default – you don’t have to spend any time messing around with security settings.

This might be more important than you realize. We live in a world increasingly beset by data breaches and ransomware attacks, and more people are waking up to the importance of data security and privacy. This means that a company that takes these aspects of its platform very seriously could have a leg up where building trust is concerned. Your users want to know that their information is safe with you, and using a messaging service like WhatsApp will help to set you apart.

Scalability

Finally, WhatsApp’s Business API is a sophisticated programmatic interface designed to scale your business’s outreach capabilities. By leveraging this tool, companies can connect with a broader audience, extending their reach to prospects and customers across various locations. This expansion is not just about increasing numbers; it’s about strategically enhancing your business’s presence in the digital world, ensuring that you’re accessible whenever your customers need to reach out to you.

By understanding the value WhatsApp’s Business API brings in reaching and engaging with more people effectively, you can make an informed decision about whether it represents the right technological solution for your business’s expansion and customer engagement strategies.
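If you’re wondering what “programmatic” looks like in practice, the sketch below builds (but does not send) a request for a plain text message. It is based on our understanding of the request shape used by Meta’s hosted Cloud API; the API version string, the phone number ID, the access token, and the helper names `build_text_message` and `build_request` are all placeholders or assumptions, so check Meta’s current documentation before relying on any of it.

```python
import json
import urllib.request

GRAPH_BASE = "https://graph.facebook.com"


def build_text_message(to: str, body: str) -> dict:
    """Build the JSON payload for a plain text message (assumed shape)."""
    return {
        "messaging_product": "whatsapp",
        "to": to,
        "type": "text",
        "text": {"body": body},
    }


def build_request(
    phone_number_id: str,
    access_token: str,
    payload: dict,
    api_version: str = "v19.0",  # placeholder; use the version you target
) -> urllib.request.Request:
    """Prepare the HTTP POST without sending it."""
    url = f"{GRAPH_BASE}/{api_version}/{phone_number_id}/messages"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical values for illustration only.
payload = build_text_message("15551234567", "Your order has shipped.")
request = build_request("PHONE_NUMBER_ID", "ACCESS_TOKEN", payload)
# To actually send it: urllib.request.urlopen(request)
```

Separating payload construction from sending keeps the example safe to run as-is, and it mirrors how a contact center platform would typically queue many such requests at once.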

Enhancing Contact Center Performance with WhatsApp Messaging

Now, let’s turn our attention to some of the concrete ways in which WhatsApp can improve your company’s chances of success!

Improving Response and Resolution Times

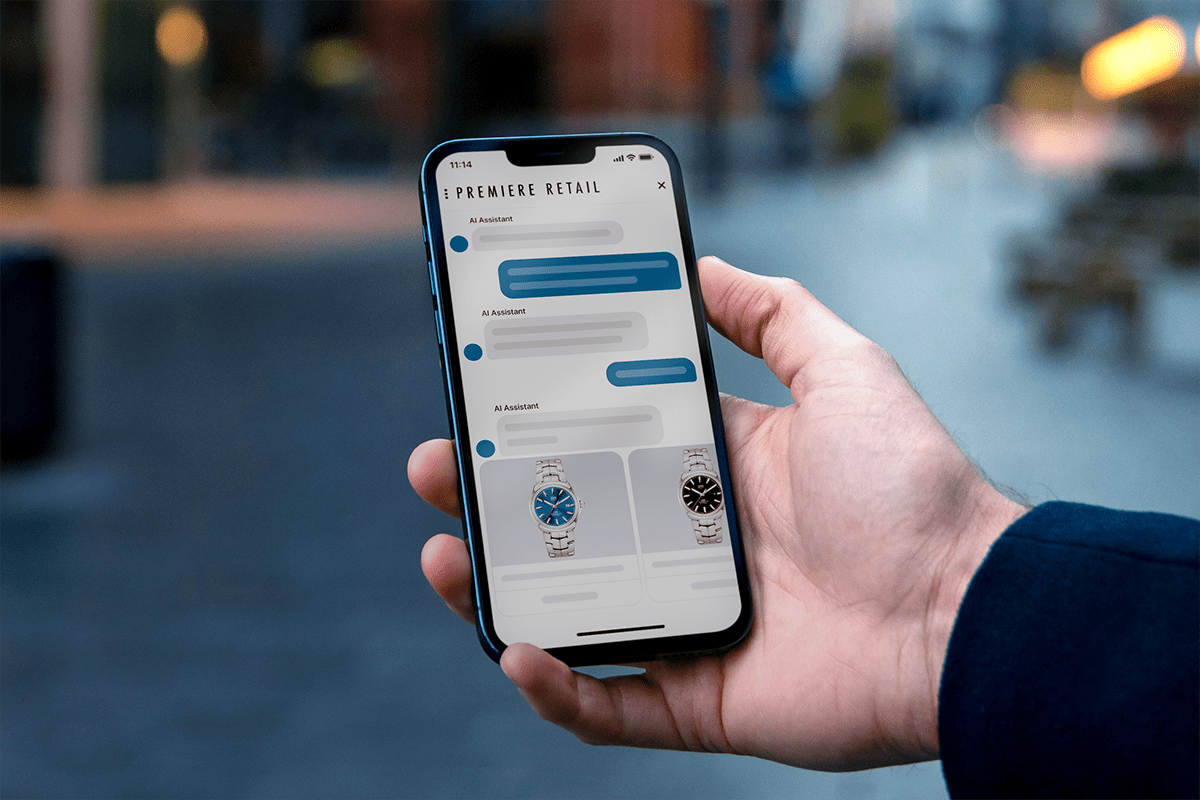

Integrating technologies like WhatsApp Business into your agent workflow can drastically improve efficiency, simultaneously reducing response times and boosting customer satisfaction. Agents often have to manage several conversations at once, and it can be challenging to keep all those plates spinning.

However, a quality messaging platform like WhatsApp means they’re better equipped to handle these conversations, especially when utilizing tools like Quiq Compose.

Additionally, less friction in resolving routine tasks means agents can dedicate their focus to issues that necessitate their expertise. This not only leads to more effective problem-solving, it also means that fewer customer inquiries are overlooked or terminated prematurely.

Integrating Artificial Intelligence

According to WhatsApp’s own documentation, there’s an ongoing effort to expand the API to allow for the integration of chatbots, AI assistants, and generative AI more broadly.

Today, these technologies possess a surprisingly sophisticated ability to conduct basic interactions, answer straightforward questions, and address a wide range of issues, all of which play a significant role in boosting customer satisfaction and making agents more productive.

We can’t say for certain when WhatsApp will roll out the red carpet for AI vendors like Quiq, but if our research over the past year is any indication, it will make it dramatically easier to keep customers happy!

Gathering Customer Feedback

Lastly, an additional advantage to WhatsApp messaging is the degree to which it facilitates collecting customer feedback. To adapt quickly and improve your services, you have to know what your customers are thinking. And more specifically, you have to know the details about what they like and dislike about your product or service.

In the Olde Days (i.e. 20 years ago, or so), the only real way to do this was by conducting focus groups, sending out surveys – sometimes through the actual mail, if you can believe it – or doing something similarly labor-intensive.

Today, however, your customers are almost certainly walking around with a smartphone that supports text messaging. And, since it’s pretty easy for them to answer a few questions or dash off a few quick lines describing their experience with your service, odds are that you can gather a great deal of feedback from them.

Now, we hasten to add that you must exercise a certain degree of caution in interpreting this kind of feedback, as getting an accurate gauge of customer sentiment is far from trivial. To name just one example, the feedback might be exaggerated in both the positive and negative directions because the people most likely to send feedback via text messaging are the ones who really liked or really didn’t like you.

That said, so long as you’re taking care to contextualize the information coming from customers, supplementing it with additional data wherever appropriate, it’s valuable to have.
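One simple way to keep that caveat in view is to report how polarized your responses are alongside the average. The sketch below assumes feedback arrives as ratings on a hypothetical 1-to-5 scale; the scale and the function name `summarize_ratings` are our own illustration, not something WhatsApp provides.

```python
from collections import Counter
from statistics import mean


def summarize_ratings(ratings: list[int], scale_max: int = 5) -> dict:
    """Summarize ratings and show how many sit at the extremes."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    counts = Counter(ratings)
    # Share of responses that are either the lowest or highest score.
    extremes = counts[1] + counts[scale_max]
    return {
        "count": len(ratings),
        "mean": mean(ratings),
        "extreme_share": extremes / len(ratings),
    }


# Hypothetical responses collected over messaging.
summary = summarize_ratings([5, 5, 1, 1, 5, 3, 1, 5])
```

A high `extreme_share` suggests the average is being driven by your happiest and unhappiest customers, which is a good cue to supplement the numbers with other data before acting on them.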

Wrapping Up

From its global reach and popularity to the personalized customer interactions it facilitates, WhatsApp Business stands out as a powerful solution for businesses aiming to enhance their digital presence and customer engagement strategies. By leveraging the advanced features of WhatsApp Business, companies can avail themselves of end-to-end encryption, enjoy scalability, and improve contact center performance, thereby positioning themselves at the forefront of the contact center game.

And speaking of being at the forefront, the Quiq conversational CX platform offers a staggering variety of different tools, from AI assistants powered by language models to advanced analytics on agent performance. Check us out or schedule a demo to see what we can do for your contact center!

Frequently Asked Questions (FAQs)

What is WhatsApp Business, and how is it different from regular WhatsApp?

WhatsApp Business is designed for companies to manage customer interactions more efficiently, offering features like message templates, chat labels, and analytics that aren’t available in the standard app.

Why should contact centers use WhatsApp Business?

It allows AI agents to connect with customers on a familiar, trusted platform, streamlining communication while improving engagement and satisfaction.

Can WhatsApp Business be automated?

Yes. When integrated with an agentic AI solution like Quiq, businesses can automate FAQs, route messages intelligently, and provide instant responses around the clock.

Is WhatsApp Business secure for customer data?

Conversations are protected with end-to-end encryption, ensuring messages remain private and compliant with security standards.

What kinds of messages can businesses send on WhatsApp?

Companies can send rich messages including text, images, videos, PDFs, and interactive elements like buttons or quick replies, creating more dynamic customer experiences.

How can contact centers scale with WhatsApp Business?

Through API integration, multiple AI agents can manage conversations simultaneously, supported by automation and reporting tools to handle large volumes efficiently.