Artificial intelligence (AI) has been making remarkable strides in recent months. Owing to the release of ChatGPT in November of 2022, a huge amount of attention has been on large language models, but the truth is, there have been similar breakthroughs in computer vision, reinforcement learning, robotics, and many other fields.

In this piece, we’re going to focus on how these advances might contribute specifically to the retail sector.

We’ll start with a broader overview of AI, then turn to how AI-based tools are making targeted advertisements, personalized offers, hiring decisions, and other parts of retail substantially easier.

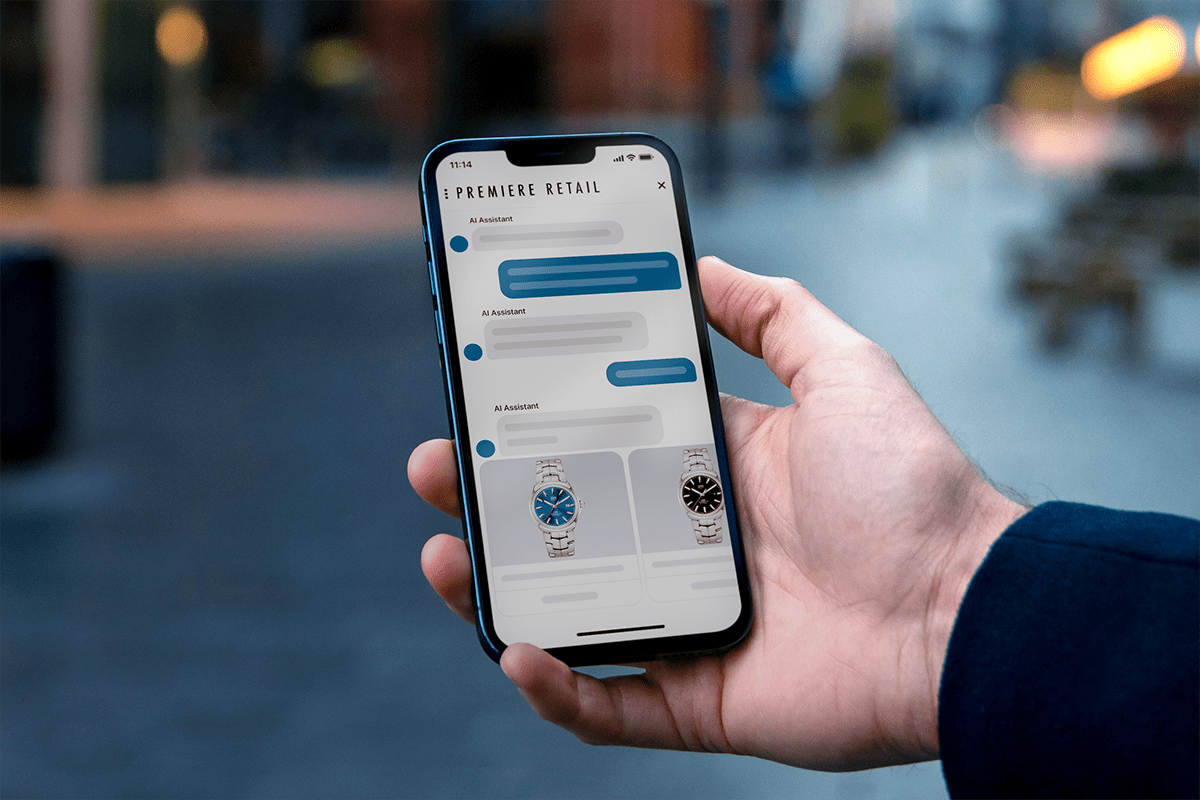

What are AI assistants in Retail?

Artificial intelligence is famously difficult to define precisely, but for our purposes, you can think of it as any attempt to get intelligent behavior from a machine. This could involve something relatively straightforward, like building a linear regression model to predict future demand for a product line, or something far more complex, like creating neural networks able to quickly spit out multiple ideas for a logo design based on a verbal description.
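
To make the simpler end of that spectrum concrete, here is a minimal sketch of a linear regression demand forecast. The weekly sales figures and the `fit_line` helper are made up for illustration; a real forecast would need to account for seasonality, promotions, and far more data.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x, and how x and y vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical weekly unit sales for one product line (invented numbers).
weeks = [1, 2, 3, 4, 5, 6]
units_sold = [100, 110, 120, 130, 140, 150]

slope, intercept = fit_line(weeks, units_sold)

# Extrapolate the fitted line one week ahead.
forecast_week_7 = slope * 7 + intercept
print(f"Forecast for week 7: {forecast_week_7:.0f} units")
```

Because the invented data grows by exactly 10 units a week, the fitted line simply continues that trend; messier real-world data would give a noisier fit.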

AI assistants are a little different and specifically require building agents capable of carrying out sequences of actions in the service of a goal. The field of AI is some 70 years old now and has been making remarkable strides over the past decade, but building robust agents remains a major challenge.

It’s anyone’s guess as to when we’ll have the kinds of agents that could successfully execute an order like “run this e-commerce store for me”, but there’s nevertheless been enough work for us to make a few comments about the state of the art.

What are the Ways of Building AI Assistants?

On its own, a model like ChatGPT can (sometimes) generate working code and (often) generate correct API calls. But as things stand, a human being still needs to utilize this code for it to do anything useful.

Efforts are underway to remedy this situation by making models able to use external tools. Auto-GPT, for example, combines an LLM and a separate bot that repeatedly queries it. Together, they can take high-level tasks and break them down into smaller, achievable steps, checking off each as it works toward achieving the overall objective.
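
The plan-then-execute loop described above can be sketched roughly as follows. This is not Auto-GPT's actual code: `stub_planner` and `stub_executor` are hypothetical stand-ins for the LLM call and the tool use, hardcoded so the example runs on its own.

```python
from collections import deque


def stub_planner(goal):
    """Stand-in for an LLM call that splits a goal into steps (hardcoded here)."""
    return [f"research: {goal}", f"draft: {goal}", f"review: {goal}"]


def stub_executor(step):
    """Stand-in for tool use; a real agent would call APIs or run code here."""
    return f"done ({step})"


def run_agent(goal, max_steps=10):
    """Break a goal into steps, then work through them one at a time."""
    todo = deque(stub_planner(goal))
    completed = []
    # max_steps caps the loop so a misbehaving planner cannot run forever.
    while todo and len(completed) < max_steps:
        step = todo.popleft()
        completed.append((step, stub_executor(step)))
    return completed


log = run_agent("weekly sales report")
for step, result in log:
    print(step, "->", result)
```

In a real agent, the planner would typically be queried again after each step so the remaining plan can change based on what has happened so far.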

AssistGPT and SuperAGI are similar endeavors, but they’re better able to handle “multimodal” tasks, i.e., those that also involve manipulating images or sounds rather than just text.

The above is a fairly cursory examination of building AI agents, but it’s not difficult to see how the retail establishments of the future might use agents. You can imagine agents that track inventory and re-order crucial items when they get low, that keep an eye on sales figures and create reports based on their findings (perhaps even using voice synthesis to actually deliver those reports), or that create customized marketing campaigns, generating their own text, images, and A/B tests to find the highest-performing strategies.
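
As a small illustration of the inventory-tracking idea, here is a sketch of the rule such an agent might apply. The `StockItem` fields, the SKUs, and the thresholds are all hypothetical, and a real agent would read stock levels from an inventory system and place orders through a supplier API rather than return a dictionary.

```python
from dataclasses import dataclass


@dataclass
class StockItem:
    sku: str
    on_hand: int           # units currently in stock
    reorder_point: int     # re-order once stock falls to this level or below
    reorder_quantity: int  # how many units to order when that happens


def plan_reorders(items):
    """Return {sku: quantity} for every item at or below its re-order point."""
    return {
        item.sku: item.reorder_quantity
        for item in items
        if item.on_hand <= item.reorder_point
    }


# Invented inventory snapshot.
inventory = [
    StockItem("train-set", on_hand=4, reorder_point=10, reorder_quantity=50),
    StockItem("lego-castle", on_hand=35, reorder_point=20, reorder_quantity=40),
    StockItem("plush-bear", on_hand=20, reorder_point=20, reorder_quantity=30),
]

orders = plan_reorders(inventory)
print(orders)
```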

What are the Advantages of Using AI in Retail Business?

Now that we’ve talked a little bit about how AI and AI assistants can be used in retail, let’s spend some time talking about why you might want to do this in the first place. What, in other words, are the big advantages of using AI in retail?

1. Personalized Marketing with AI

People can’t buy your products if they don’t know what you’re selling, which is why marketing is such a big part of retail. For its part, marketing has long been a future-oriented business, interested in leveraging the latest research from psychology or economics on how people make buying decisions.

A kind of holy grail for marketing is making ultra-precise, bespoke marketing efforts that target specific individuals. The kind of messaging that would speak to a childless lawyer in a big city won’t resonate the same way with a suburban mother of five, and vice versa.

The problem, of course, is that there’s just no good way at present to do this at scale. Even if you had everything you needed to craft the ideal copy for both the lawyer and the mother, it’s exceedingly difficult to have human beings do this work and make sure it ends up in front of the appropriate audience.

AI could, in theory, remedy this situation. With the rise of social media, it has become possible to gather stupendous amounts of information about people, grouping them into precise and fine-grained market segments, and with platforms like Facebook Ads, you can make highly targeted advertisements for each of these segments.

AI can help with the initial analysis of this data, i.e. looking at how people in different occupations or parts of the country differ in their buying patterns. But with advanced prompt engineering and better LLMs, it could also help in actually writing the copy that induces people to buy your products or services.

And it doesn’t require much imagination to see how AI assistants could take over quite a lot of this process. Much of the required information is already available, meaning that an agent would “just” need to be able to build simple models of different customer segments, and then put together a prompt that generates text that speaks to each segment.
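
Here is one way the "segment model plus prompt" step might look in miniature. The segment definitions, the product, and the `build_prompt` helper are assumptions made for the example; the resulting prompts would still need to be sent to an LLM, and the copy it returns reviewed by a person.

```python
# Hypothetical customer segments; in practice these would come from your own data.
segments = {
    "urban_lawyer": {
        "description": "childless lawyer in a big city",
        "priorities": ["saving time", "quality"],
    },
    "suburban_parent": {
        "description": "suburban mother of five",
        "priorities": ["value for money", "durability"],
    },
}


def build_prompt(product, segment, max_words=50):
    """Turn a segment profile into an instruction for a text-generation model."""
    priorities = " and ".join(segment["priorities"])
    return (
        f"Write an ad of at most {max_words} words for {product}. "
        f"The reader is a {segment['description']} "
        f"who cares most about {priorities}."
    )


# One prompt per segment, all for the same product.
prompts = {
    name: build_prompt("a wooden train set", seg)
    for name, seg in segments.items()
}

for name, prompt in prompts.items():
    print(f"{name}: {prompt}")
```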

2. Personalized Offerings with AI

A related but distinct possibility is using AI assistants to create bespoke offerings. As with messaging, people will respond to different package deals; if you know how to put one together for each potential customer, there could be billions in profits waiting for you. Companies like Starbucks have been moving towards personalized offerings for a while, but AI will make it much easier for other retailers to jump on this trend.

We’ll illustrate how this might work with a fictional example. Let’s say you’re running a toy company, and you’re looking at data for Angela and Bob. Angela is an occasional customer, mostly making purchases around the holidays. When she created her account she indicated that she doesn’t have children, so you figure she’s probably buying toys for a niece or nephew. She’s not a great target for a personalized offer, unless perhaps it’s a generic 35% discount around Christmas time.

Bob, on the other hand, buys new train sets from you on an almost weekly basis. He more than likely has a son or daughter who’s fascinated by toy machines, and you have customer-recommendation algorithms trained on many purchases indicating that parents who buy the trains also tend to buy certain Lego sets. So, next time Bob visits your site, your AI assistant can offer him a personalized discount on Lego sets.
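
The "parents who buy trains also buy Lego" signal can be approximated with simple co-purchase counting, sketched below. The order history is invented, and production recommendation systems are considerably more sophisticated than this; treat it as the bare idea only.

```python
from collections import Counter


def co_purchase_counts(orders, anchor):
    """Count how often each other item shows up in baskets containing `anchor`."""
    counts = Counter()
    for basket in orders:
        if anchor in basket:
            counts.update(item for item in basket if item != anchor)
    return counts


# Invented order history; each set is one customer's basket.
past_orders = [
    {"train set", "lego city"},
    {"train set", "lego city", "puzzle"},
    {"train set", "puzzle"},
    {"doll", "puzzle"},
    {"train set", "lego city"},
]

counts = co_purchase_counts(past_orders, "train set")

# The most frequent companion item is a natural candidate for Bob's discount.
best_item, times_bought_together = counts.most_common(1)[0]
print(best_item, times_bought_together)
```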

Maybe he bites this time, maybe he doesn’t, but you can see how being able to dynamically create offerings like this would help you move inventory and boost individual customer satisfaction a great deal. AI can’t yet totally replace humans in this kind of process, but it can go a long way toward reducing the friction involved.

3. Smarter Pricing

The scenario we just walked through is part of a broader phenomenon of smart pricing. In economics, there’s a concept known as “price discrimination”, which involves charging a person roughly what they’re willing to pay for an item. There may be people who are interested in buying your book for $20, for example, but others who are only willing to pay $15 for it. If you had a way of changing the price to match what a potential buyer was willing to pay for it, you could make a lot more money (assuming that you’re always charging a price that at least covers printing and shipping costs).
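
A little arithmetic shows why this matters. The sketch below uses the $20 and $15 figures from the book example, plus two assumptions of ours: 100 potential buyers at each level and an $8 cost to print and ship each copy.

```python
def profit_at_single_price(willingness, price, unit_cost):
    """Profit when everyone sees the same price; only those willing to pay it buy."""
    buyers = sum(1 for w in willingness if w >= price)
    return buyers * (price - unit_cost)


def profit_with_matched_prices(willingness, unit_cost):
    """Profit when each buyer is charged exactly what they are willing to pay."""
    return sum(w - unit_cost for w in willingness if w >= unit_cost)


# Assumed market: 100 people willing to pay $20 and 100 willing to pay $15.
willingness = [20] * 100 + [15] * 100
unit_cost = 8  # assumed printing and shipping cost per book

profit_at_20 = profit_at_single_price(willingness, 20, unit_cost)
profit_at_15 = profit_at_single_price(willingness, 15, unit_cost)
profit_matched = profit_with_matched_prices(willingness, unit_cost)

print(profit_at_20, profit_at_15, profit_matched)
```

Under these assumptions, charging everyone $20 earns $1,200, charging everyone $15 earns $1,400, and matching each buyer's price earns $1,900.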

The issue, of course, is that it’s very difficult to know what people will pay for something–but with more data and smarter AI tools, we can get closer. This will have the effect of simultaneously increasing your market (by bringing in people who weren’t quite willing to make a purchase at a higher price) and increasing your earnings (by facilitating many sales that otherwise wouldn’t have taken place).

More or less the same abilities will also help with inventory more generally. If you sell clothing you probably have a clearance rack for items that are out of season, but how much should you discount these items? Some people might be fine paying almost full price, while others might need to see a “60% off” sticker before moving forward. With AI, it’ll soon be possible to adjust such discounts in real time to make sure you’re always doing brisk business.

4. AI and Smart Hiring

One place where AI has been making real inroads is in hiring. It seems like we can’t listen to any major podcast today without hearing about some hiring company that makes extensive use of natural language processing and similar tools to find the best employees for a given position.

Our prediction is that this trend will only continue. As AI becomes increasingly capable, eventually it will be better than any but the best hiring managers at picking out talent; retail establishments, therefore, will rely on it more and more to put together their sales force, design and engineering teams, etc.

Is it Worth Using AI in Retail?

Throughout this piece, we’ve sung the praises of AI in retail. But the truth is, there are still questions about how much sense it makes to leverage AI in retail at the moment, given its expense and risks.

In this section, we’ll briefly go over some of the challenges of using AI in retail so you can have a fuller picture of how its advantages compare to its disadvantages, and thereby make a better decision for your situation.

The challenge that’s on everyone’s minds these days is the tendency of even powerful systems like ChatGPT to hallucinate incorrect information or to generate output that is biased or harmful. Fine-tuning and techniques like retrieval-augmented generation can mitigate this somewhat, but you’ll still have to spend a lot of time monitoring and tinkering with the models to make sure that you don’t end up with a PR disaster on your hands.

Another major factor is the expense involved. Training a model on your own can cost millions of dollars, but even just hiring a team to manage an open-source model will likely set you back a fair bit (engineers aren’t cheap).

By far the safest and easiest way of testing out AI for retail is by using a white glove solution like the Quiq conversational CX platform. You can test out our customer-facing and agent-facing AI tools while leaving the technical details to us, and at far less expense than would be involved in hiring engineering talent.

Set up a demo with us to see what we can do for you.

AI is Changing Retail

From computer-generated imagery to futuristic AI-based marketing plans, retail won’t be the same with the advent of AI. This will be especially true once we have robust AI assistants able to answer customer questions, help them find clothes that fit, and offer precision discounts and offerings tailored to each individual shopper.

If you don’t want to get left behind, you’ll need to begin exploring AI as soon as possible, and we can help you do that. Check out our product or find a time to talk with us today!