Along with almost everyone else, we’ve been singing the praises of large language models like ChatGPT for a while now. We’ve noted how they can be used in retail, how they’re already supercharging contact center agents, and have even put out some content on how researchers are pushing the frontiers of what this technology is capable of.

But none of this is to say that generative AI doesn’t come with serious concerns for security and compliance. In this article, we’ll do a deep dive into these issues. We’ll first provide some context on how advanced AI is being deployed in contact centers, before turning our attention to subjects like data leaks, lack of transparency, and overreliance. Finally, we’ll close with a treatment of the best practices contact center managers can use to alleviate these problems.

What is a “Next-Gen” Contact Center?

First, what are some ways in which a next-generation contact center might actually be using AI? Understanding this will be valuable background for the rest of the discussion about security and compliance, because knowing what generative AI is doing is a crucial first step in protecting ourselves from its potential downsides.

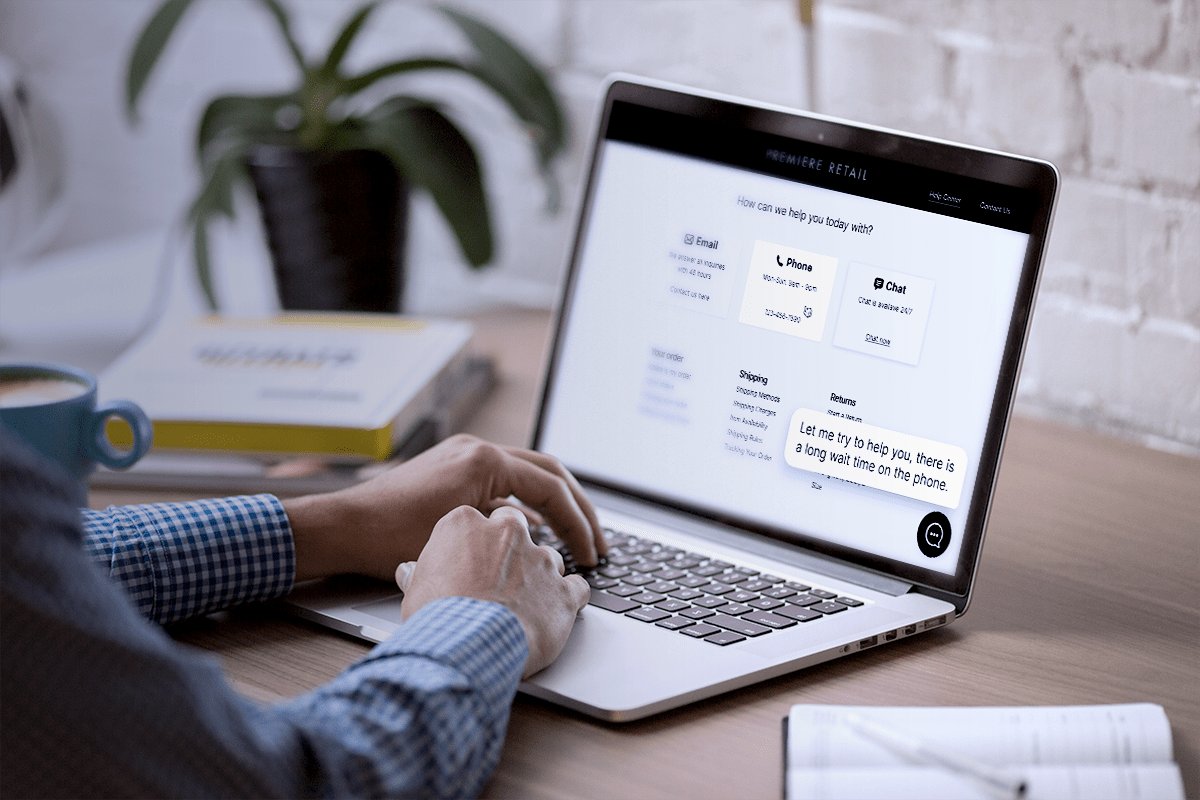

Businesses like contact centers tend to engage in a lot of textual communication, such as when resolving customer issues or responding to inquiries. Due to their proficiency in understanding and generating natural language, LLMs are an obvious tool to reach for when trying to automate or streamline these tasks; for this reason, they have become increasingly popular in enhancing productivity within contact centers.

To give specific examples, there are several key areas where contact center managers can effectively utilize LLMs:

Responding to Customer Queries – High-quality documentation is crucial, yet there will always be customers needing assistance with specific problems. While LLMs like ChatGPT may not have all the answers, they can address many common inquiries, particularly when they’ve been fine-tuned on your company’s documentation.

Facilitating New Employee Training – Similarly, a language model can significantly streamline the onboarding process for new staff members. As they familiarize themselves with your technology and procedures, they will inevitably run into points of confusion, and this is where AI can provide quick and relevant information.

Condensing Information – While it may be possible to keep abreast of everyone’s activities on a small team, this becomes much more challenging as the team grows. Generative AI can assist by summarizing emails, articles, support tickets, or Slack threads, allowing team members to stay informed without spending every moment of the day reading.

Sorting and Prioritizing Issues – Not all customer inquiries or issues carry the same level of urgency or importance. Efficiently categorizing and prioritizing these for contact center agents is another area where a language model can be highly beneficial. This is especially so when it’s integrated into a broader machine-learning framework, such as one that’s designed to adroitly handle classification tasks.

Language Translation – If your business has a global reach, you’re eventually going to encounter non-English-speaking users. While tools like Google Translate are effective, a well-trained language model such as ChatGPT can often provide superior translation services, enhancing communication with a diverse customer base.
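To make the “Sorting and Prioritizing Issues” item above a little more concrete, here is a minimal Python sketch of a triage step. It rests on stated assumptions: the `Ticket` class, the `URGENT_TERMS` keywords, and the `triage` function are all hypothetical, and the keyword check is only a stand-in for whatever classifier or language model you would actually use to score urgency.

```python
from dataclasses import dataclass

# Hypothetical urgency keywords, for illustration only. A real system would
# replace this lookup with a trained classifier or a language model call.
URGENT_TERMS = ("outage", "cannot log in", "charged twice", "data breach")


@dataclass
class Ticket:
    ticket_id: int
    text: str


def priority(ticket: Ticket) -> int:
    """Return 0 for urgent tickets and 1 for everything else (lower sorts first)."""
    lowered = ticket.text.lower()
    return 0 if any(term in lowered for term in URGENT_TERMS) else 1


def triage(tickets: list[Ticket]) -> list[Ticket]:
    """Put urgent tickets first; sorted() is stable, so ties keep arrival order."""
    return sorted(tickets, key=priority)


tickets = [
    Ticket(1, "How do I change my avatar?"),
    Ticket(2, "I was charged twice this month"),
    Ticket(3, "Where is the invoice page?"),
]
ordered_ids = [t.ticket_id for t in triage(tickets)]
```

The point of the design is that the scoring function is isolated in `priority`, so swapping the keyword check for a model-based score would not change how the queue is ordered.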

What are the Security and Compliance Concerns for AI?

The preceding section provided valuable context on the ways generative AI is powering the future of contact centers. With that in mind, let’s turn to a specific treatment of the security and compliance concerns this technology brings with it.

Data Leaks and PII

First, it’s no secret that language models are trained on truly enormous amounts of data. And with that, there’s a growing worry about potentially exposing “Personally Identifiable Information” (PII) to generative AI models. PII encompasses details like your actual name and residential address, as well as sensitive information like health records. It’s important to note that even if these records don’t directly mention your name, they could still be used to deduce your identity.

While our understanding of the exact data seen by language models during their training remains incomplete, it’s reasonable to assume they’ve encountered some sensitive data, considering how much of that kind of data exists on the internet. What’s more, even if a specific piece of PII hasn’t been directly shown to an LLM, there are numerous ways it might still come across such data. Someone might input customer data into an LLM to generate customized content, for instance, not recognizing that, depending on the provider’s data policies, those inputs may be retained and used to train future versions of the model.

Currently, there’s no reliable method for removing specific data from a trained language model, and no finetuning technique has yet been found that ensures a model never reveals that data again.

Over-Reliance on Models

Are you familiar with the term “ultracrepidarianism”? It’s a fancy SAT word that refers to the habit of consistently giving advice or expressing opinions on things that one simply has no expertise in.

A similar sort of situation can arise when people rely too much on language models, or use them for tasks that they’re not well-suited for. These models, for example, are well known to hallucinate (i.e. completely invent plausible-sounding information that is false). If you were to ask ChatGPT for a list of 10 scientific publications related to a particular scientific discipline, you could well end up with nine real papers and one that’s fabricated outright.

From a compliance and security perspective, this matters because you should have qualified humans fact-checking a model’s output – especially if it’s technical or scientific.

To concretize this a bit, imagine you’ve finetuned a model on your technical documentation and used it to produce a series of steps that a customer can use to debug your software. This is precisely the sort of thing that should be fact-checked by one of your agents before being sent.
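One way to picture that review step is as a gate between the model and the customer. The Python sketch below is only an illustration under assumed names: `Draft`, `Status`, `review`, and `send_if_approved` are hypothetical, and the `outbox` list stands in for whatever channel actually delivers messages.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    """A model-generated reply that starts out unreviewed."""
    customer_id: str
    text: str
    status: Status = Status.PENDING


def review(draft: Draft, approved: bool) -> Draft:
    """Record a human agent's decision on the draft."""
    draft.status = Status.APPROVED if approved else Status.REJECTED
    return draft


def send_if_approved(draft: Draft, outbox: list[str]) -> bool:
    """Deliver the draft only if an agent approved it; return whether it was sent."""
    if draft.status is not Status.APPROVED:
        return False
    outbox.append(draft.text)
    return True


outbox: list[str] = []
draft = Draft("customer-1", "Step 1: restart the application.")
sent_before_review = send_if_approved(draft, outbox)  # blocked: still pending
review(draft, approved=True)
sent_after_review = send_if_approved(draft, outbox)   # delivered
```

Because every draft defaults to pending, nothing reaches a customer unless an agent has explicitly signed off on it.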

Not Enough Transparency

Large language models are essentially gigantic statistical artifacts that result from feeding an algorithm huge amounts of textual data and having it learn to predict the next word based on the words that came before.

The good news is that this works much better than most of us thought it would. The bad news is that the resulting structure is almost completely inscrutable. While a machine learning engineer might be able to give you a high-level explanation of how the training process works or how a language model generates an output, no one in the world really has a good handle on the details of what these models are doing on the inside. That’s why there’s so much effort being poured into various approaches to interpretability and explainability.

As AI has become more ubiquitous, numerous industries have drawn fire for their reliance on technologies they simply don’t understand. It’s not a good look if a bank loan officer can only shrug and say “The machine told me to” when asked why one loan applicant was approved and another wasn’t.

Depending on exactly how you’re using generative AI, this may not be a huge concern for you. But it’s worth knowing that if you are using language models to make recommendations or as part of a decision process, someone, somewhere may eventually ask you to explain what’s going on. And it’d be wise for you to have an answer ready beforehand.

Compliance Standards Contact Center Managers Should be Familiar With

To wrap this section up, we’ll briefly cover some of the more common compliance standards that might impact how you run your contact center. This material is only a sketch, and should not be taken to be any kind of comprehensive breakdown.

The General Data Protection Regulation (GDPR) – The famous GDPR is a regulation put out by the European Union that establishes rules around how personal data must be handled. It applies to any business that handles the personal data of people in the EU, not just to companies physically located on the European continent.

The California Consumer Privacy Act (CCPA) – In a bid to give individuals more sovereignty over what happens to their personal data, California created the CCPA. It stipulates that companies have to be clearer about how they gather data, that they have to include privacy disclosures, and that Californians must be given the choice to opt out of the sale of their personal data.

SOC 2 – SOC 2 is a set of standards created by the American Institute of Certified Public Accountants (AICPA) that stresses confidentiality, privacy, and security with respect to how customer data is handled and processed.

Consumer Duty – Contact centers operating in the U.K. should know about The Financial Conduct Authority’s new “Consumer Duty” regulations. The regulations’ key themes are that firms must act in good faith when dealing with customers, prevent any foreseeable harm to them, and do whatever they can to further the customer’s pursuit of their own financial goals. Lawmakers are still figuring out how generative AI will fit into this framework, but it’s something affected parties need to monitor.

Best Practices for Security and Compliance when Using AI

Now that we’ve discussed the myriad security and compliance concerns facing contact centers that use generative AI, we’ll close by offering advice on how you can deploy this amazing technology without running afoul of rules and regulations.

Have Consistent Policies Around Using AI

First, you should have a clear and robust framework that addresses who can use generative AI, under what circumstances, and for what purposes. This way, your agents know the rules, and your contact center managers know what they need to monitor and look out for.

As part of crafting this framework, you must carefully study the rules and regulations that apply to you, and you have to ensure that this is reflected in your procedures.

Train Your Employees to Use AI Responsibly

Generative AI might seem like magic, but it’s not. It doesn’t function on its own; it has to be steered by a human being. But since it’s so new, you can’t treat it like something everyone will already know how to use, like a keyboard or Microsoft Word. Your employees should understand the policy that you’ve created around AI’s use, should understand which situations require human fact-checking, and should be aware of the basic failure modes, such as hallucination.

Be Sure to Encrypt Your Data

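If you’re worried about PII or data leakage, a straightforward step is to encrypt your data wherever it’s stored or transmitted, and to anonymize or redact it before it ever reaches a generative AI tool. Encryption protects data from unauthorized access, while anonymization removes the identifying details themselves. If you anonymize data correctly, there’s little concern that a model will accidentally disclose something it’s not supposed to down the line.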
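As a rough illustration of scrubbing identifying details before text reaches a model, here is a minimal Python sketch. It is deliberately simplistic and built on assumptions: the `PII_PATTERNS` table and the `redact_pii` function are hypothetical, the patterns cover only emails, US-style phone numbers, and US Social Security numbers, and a real deployment would need far broader detection than a few regular expressions.

```python
import re

# Hypothetical patterns for illustration; real PII detection needs much
# wider coverage (names, addresses, account numbers, and so on).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text: str) -> str:
    """Replace each match of a known pattern with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


message = "Reach me at jane.doe@example.com or 555-123-4567."
safe_message = redact_pii(message)
```

The redacted `safe_message`, rather than the original text, is what would be passed along to any generative AI tool.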

Roll Your Own Model (Or Use a Vendor You Trust)

The best way to ensure that you have total control over the model pipeline – including the data it’s trained on and how it’s finetuned – is to simply build your own. That being said, many teams will simply not be able to afford to hire the kinds of engineers who are equal to this task. In that case, you should utilize a model built by a third party with a sterling reputation and many examples of prior success, like the Quiq platform.

Engage in Regular Auditing

As we mentioned earlier, AI isn’t magic – it can sometimes perform in unexpected ways, and its performance can also simply degrade over time. You need to establish a practice of regularly auditing any models you have in production to make sure they’re still behaving appropriately. If they’re not, you may need to do another training run, examine the data they’re being fed, or try to finetune them.
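A recurring audit can be as simple as replaying a fixed set of questions and checking the answers. The Python sketch below shows one way this might look, under stated assumptions: the `AUDIT_CASES` prompts and expected phrases are invented for illustration, `audit` is a hypothetical helper, and `model` is any function that takes a prompt and returns text, standing in for your production system.

```python
from typing import Callable

# Hypothetical audit cases: a prompt plus a phrase a correct answer must contain.
AUDIT_CASES = [
    ("How do I reset my password?", "reset link"),
    ("What is your refund window?", "30 days"),
]


def audit(
    model: Callable[[str], str],
    cases: list[tuple[str, str]] = AUDIT_CASES,
    threshold: float = 0.9,
) -> tuple[float, bool]:
    """Return (pass_rate, needs_attention) for a model over the audit cases."""
    passed = sum(
        1 for prompt, expected in cases
        if expected.lower() in model(prompt).lower()
    )
    pass_rate = passed / len(cases)
    return pass_rate, pass_rate < threshold


def degraded_model(prompt: str) -> str:
    """A stand-in model that gives the same answer to every question."""
    return "We will email you a reset link."


pass_rate, needs_attention = audit(degraded_model)
```

Substring matching is a crude check; it is used here only to keep the sketch short. Running the same cases on a schedule and tracking the pass rate over time is what makes a gradual decline visible.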

Futureproofing Your Contact Center Security

The next generation of contact centers is almost certainly going to be one that makes heavy use of generative AI. There are just too many advantages, from lower average handling time to reduced burnout and turnover, to forgo it.

But doing this correctly is a major task, and if you want to skip the engineering hassle and headache, give the Quiq conversational AI platform a try! We have the expertise required to help you integrate a robust, powerful generative AI tool into your contact center, without the need to write a hundred thousand lines of code.